Cross-Sectional Model Comparison: bayespecon vs spreg¶

Columbus example¶

This notebook compares equivalent cross-sectional models between bayespecon and spreg on a real example dataset from the PySAL/spreg ecosystem.

The dependent variable is HOVAL, with INC and CRIME used as explanatory variables.

Model mapping used:

SLX(bayespecon) vsspreg.OLS(slx_lags=1)SAR(bayespecon) vsspreg.GM_LagSEM(bayespecon) vsspreg.GM_ErrorSDM(bayespecon) vsspreg.GM_Lag(slx_lags=1, w_lags=2)SDEM(bayespecon) vsspreg.GM_Error(slx_lags=1)

Dataset: Columbus neighborhood data from libpysal.examples.

import geopandas as gpd

import libpysal

import numpy as np

import pandas as pd

import spreg

from IPython.display import display

from spreg.sputils import _sp_effects, spmultiplier

from bayespecon import SAR, SDEM, SDM, SEM, SLX

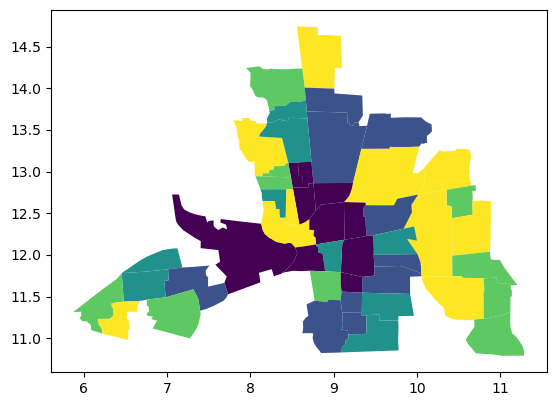

columbus = gpd.read_file(libpysal.examples.get_path("columbus.shp"))

columbus.plot("HOVAL", scheme="quantiles")

<Axes: >

w = libpysal.graph.Graph.build_contiguity(columbus).transform("r")

y = columbus.HOVAL.values

X = columbus[["INC", "CRIME"]]

spreg_results = {

"SLX": spreg.OLS(y, X, w=w, slx_lags=1, name_y="HOVAL", name_x=["INC", "CRIME"]),

"SAR": spreg.GM_Lag(y, X, w=w, name_y="HOVAL", name_x=["INC", "CRIME"]),

"SEM": spreg.GM_Error(y, X, w=w, name_y="HOVAL", name_x=["INC", "CRIME"]),

"SDM": spreg.GM_Lag(

y, X, w=w, slx_lags=1, w_lags=2, name_y="HOVAL", name_x=["INC", "CRIME"]

),

"SDEM": spreg.GM_Error(

y, X, w=w, slx_lags=1, name_y="HOVAL", name_x=["INC", "CRIME"]

),

}

spec = "HOVAL ~ 1 + INC + CRIME"

sample_kwargs = dict(draws=1000, tune=1000, chains=4, random_seed=42, progressbar=False)

bayes_models = {

"SLX": SLX(spec, data=columbus, W=w),

"SAR": SAR(spec, data=columbus, W=w),

"SEM": SEM(spec, data=columbus, W=w),

"SDM": SDM(spec, data=columbus, W=w),

"SDEM": SDEM(spec, data=columbus, W=w),

}

bayes_idata = {name: model.fit(**sample_kwargs) for name, model in bayes_models.items()}

GM_Lag

GM_Error

GM_Lag

GM_Error

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 2 jobs)

NUTS: [beta, sigma]

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

Sampling 4 chains for 1_000 tune and 1_000 draw iterations (4_000 + 4_000 draws total) took 19 seconds.

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 2 jobs)

NUTS: [rho, beta, sigma]

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

Sampling 4 chains for 1_000 tune and 1_000 draw iterations (4_000 + 4_000 draws total) took 16 seconds.

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 2 jobs)

NUTS: [lam, beta, sigma]

Sampling 4 chains for 1_000 tune and 1_000 draw iterations (4_000 + 4_000 draws total) took 14 seconds.

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 2 jobs)

NUTS: [rho, beta, sigma]

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

Sampling 4 chains for 1_000 tune and 1_000 draw iterations (4_000 + 4_000 draws total) took 20 seconds.

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 2 jobs)

NUTS: [lam, beta, sigma]

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

Sampling 4 chains for 1_000 tune and 1_000 draw iterations (4_000 + 4_000 draws total) took 19 seconds.

There were 5 divergences after tuning. Increase `target_accept` or reparameterize.

bayes_models["SAR"].summary()

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | 37.791 | 14.077 | 12.352 | 64.956 | 0.337 | 0.260 | 1742.0 | 2017.0 | 1.0 |

| INC | 0.562 | 0.528 | -0.432 | 1.544 | 0.012 | 0.009 | 1956.0 | 2047.0 | 1.0 |

| CRIME | -0.450 | 0.176 | -0.790 | -0.127 | 0.004 | 0.003 | 2015.0 | 2223.0 | 1.0 |

| rho | 0.216 | 0.163 | -0.087 | 0.513 | 0.003 | 0.003 | 2358.0 | 1957.0 | 1.0 |

| sigma | 14.893 | 1.507 | 12.071 | 17.617 | 0.030 | 0.026 | 2500.0 | 2142.0 | 1.0 |

Sampler adequacy for \(\rho\)¶

Before trusting the SAR posterior summary above, verify that the spatial parameter \(\rho\) has been sampled efficiently. Wolf, Anselin & Arribas-Bel (2018) [Wolf et al., 2018] show that \(\rho\) tends to mix slowly, so a chain that converges in mean can still under-cover the tails by 10–12 %.

from bayespecon import spatial_mcmc_diagnostic

spatial_mcmc_diagnostic(bayes_models["SAR"], emit_warnings=False).to_frame()

| ess_bulk | ess_tail | r_hat | mcse_mean | yield_pct | hpdi_drift_pct | adequate | |

|---|---|---|---|---|---|---|---|

| parameter | |||||||

| rho | 2357.684873 | 1956.972676 | 1.003056 | 0.003339 | 58.942122 | 3.318067 | True |

print(spreg_results["SAR"].summary)

REGRESSION RESULTS

------------------

SUMMARY OF OUTPUT: SPATIAL TWO STAGE LEAST SQUARES

------------------------------------------------------------------------------------

Data set : unknown

Weights matrix : unknown

Dependent Variable : HOVAL Number of Observations: 49

Mean dependent var : 38.4362 Number of Variables : 4

S.D. dependent var : 18.4661 Degrees of Freedom : 45

Pseudo R-squared : 0.2902

Spatial Pseudo R-squared: 0.3560

------------------------------------------------------------------------------------

Variable Coefficient Std.Error z-Statistic Probability

------------------------------------------------------------------------------------

CONSTANT 55.67226 20.24627 2.74975 0.00596

INC 0.69542 0.55487 1.25329 0.21010

CRIME -0.51907 0.19376 -2.67898 0.00738

W_HOVAL -0.23275 0.38231 -0.60878 0.54267

------------------------------------------------------------------------------------

Instrumented: W_HOVAL

Instruments: W_CRIME, W_INC

DIAGNOSTICS FOR SPATIAL DEPENDENCE

TEST DF VALUE PROB

Anselin-Kelejian Test 1 2.075 0.1497

SPATIAL LAG MODEL IMPACTS

Impacts computed using the 'simple' method.

Variable Direct Indirect Total

INC 0.6954 -0.1313 0.5641

CRIME -0.5191 0.0980 -0.4211

================================ END OF REPORT =====================================

def extract_spreg_params(model):

names = list(getattr(model, "name_x", []))

betas = np.asarray(model.betas).flatten()

if len(betas) == len(names) + 1 and type(model).__name__ == "GM_Lag":

names = names + ["rho"]

if len(names) != len(betas):

names = [f"param_{i}" for i in range(len(betas))]

return pd.Series(betas, index=names, dtype=float)

def extract_bayespecon_params(model_name, model, idata):

out = {}

beta_mean = idata.posterior["beta"].mean(("chain", "draw")).values

beta_names = (

model._beta_names()

if model_name in {"SLX", "SDM", "SDEM"}

else list(model._feature_names)

)

for name, val in zip(beta_names, beta_mean):

out[name] = float(val)

if model_name in {"SAR", "SDM"}:

out["rho"] = float(idata.posterior["rho"].mean())

if model_name in {"SEM", "SDEM"}:

out["lambda"] = float(idata.posterior["lam"].mean())

harmonized = {}

for k, v in out.items():

key = k.replace("W*", "W_")

if key.lower() == "intercept":

key = "CONSTANT"

harmonized[key] = v

return pd.Series(harmonized, dtype=float)

def bayes_effects_df(model):

eff = model.spatial_effects()

# spatial_effects() returns a DataFrame indexed by feature names

return pd.DataFrame(

{

"feature": eff.index.tolist(),

"direct_bayespecon": eff["direct"].values,

"indirect_bayespecon": eff["indirect"].values,

"total_bayespecon": eff["total"].values,

}

)

def spreg_effects_df(model_name, sp_model):

if model_name == "SEM":

# SEM has no spatial multiplier on the systematic component,

# so direct = coefficient, indirect = 0, total = coefficient.

# bayespecon excludes the intercept from effects, so we

# only compare non-constant covariates here.

sp_params = extract_spreg_params(sp_model)

features = [f for f in ["INC", "CRIME"] if f in sp_params.index]

return pd.DataFrame(

{

"feature": features,

"direct_spreg": [float(sp_params[f]) for f in features],

"indirect_spreg": [0.0 for _ in features],

"total_spreg": [float(sp_params[f]) for f in features],

}

)

output = sp_model.output.copy()

output["regime"] = "global"

def classify_var(var_name):

if var_name == "CONSTANT":

return "o"

if var_name in {"lambda", "W_HOVAL"}:

return "rho"

if var_name.startswith("W_"):

return "wx"

return "x"

output["var_type"] = output["var_names"].map(classify_var)

sp_model.output = output

vars_x = output.query("var_type == 'x'")

rho = (

float(np.squeeze(getattr(sp_model, "rho", 0.0)))

if hasattr(sp_model, "rho")

else 0.0

)

multipliers = spmultiplier(w, rho)

slx_lags = getattr(sp_model, "slx_lags", 0) or (

1 if model_name in {"SLX", "SDEM"} else 0

)

slx_vars = getattr(sp_model, "slx_vars", None)

if slx_vars is None:

slx_vars = "All"

total_spreg, direct_spreg, indirect_spreg = _sp_effects(

sp_model,

vars_x,

multipliers,

slx_lags=slx_lags,

slx_vars=slx_vars,

)

return pd.DataFrame(

{

"feature": vars_x["var_names"].tolist(),

"direct_spreg": np.asarray(direct_spreg).flatten(),

"indirect_spreg": np.asarray(indirect_spreg).flatten(),

"total_spreg": np.asarray(total_spreg).flatten(),

}

)

coef_rows = []

effect_tables = {}

for model_name in ["SLX", "SAR", "SEM", "SDM", "SDEM"]:

sp = extract_spreg_params(spreg_results[model_name])

by = extract_bayespecon_params(

model_name, bayes_models[model_name], bayes_idata[model_name]

)

common = sorted(set(sp.index).intersection(set(by.index)))

for param in common:

coef_rows.append(

{

"model": model_name,

"parameter": param,

"bayespecon_posterior_mean": by[param],

"spreg_estimate": sp[param],

"difference": by[param] - sp[param],

}

)

beff = bayes_effects_df(bayes_models[model_name]).copy()

seff = spreg_effects_df(model_name, spreg_results[model_name]).copy()

merged = beff.merge(seff, on="feature", how="inner")

if not merged.empty:

merged["direct_difference"] = (

merged["direct_bayespecon"] - merged["direct_spreg"]

)

merged["indirect_difference"] = (

merged["indirect_bayespecon"] - merged["indirect_spreg"]

)

merged["total_difference"] = merged["total_bayespecon"] - merged["total_spreg"]

merged.insert(0, "model", model_name)

effect_tables[model_name] = merged

comparison = (

pd.DataFrame(coef_rows).sort_values(["model", "parameter"]).reset_index(drop=True)

)

spatial_effects_comparison = pd.concat(

[tbl for tbl in effect_tables.values() if not tbl.empty],

ignore_index=True,

)

Coefficient comparison¶

display(comparison)

| model | parameter | bayespecon_posterior_mean | spreg_estimate | difference | |

|---|---|---|---|---|---|

| 0 | SAR | CONSTANT | 37.790694 | 55.672262 | -17.881568 |

| 1 | SAR | CRIME | -0.450299 | -0.519068 | 0.068769 |

| 2 | SAR | INC | 0.562276 | 0.695416 | -0.133140 |

| 3 | SAR | rho | 0.215835 | -0.232746 | 0.448581 |

| 4 | SDEM | CONSTANT | 28.491212 | 29.460538 | -0.969327 |

| 5 | SDEM | CRIME | -0.568961 | -0.575430 | 0.006469 |

| 6 | SDEM | INC | 0.885285 | 0.820920 | 0.064365 |

| 7 | SDEM | W_CRIME | 0.267135 | 0.300693 | -0.033559 |

| 8 | SDEM | W_INC | 0.545364 | 0.478086 | 0.067278 |

| 9 | SDEM | lambda | 0.440069 | 0.358330 | 0.081739 |

| 10 | SDM | CONSTANT | 18.315262 | 1.578137 | 16.737125 |

| 11 | SDM | CRIME | -0.604442 | -0.634137 | 0.029695 |

| 12 | SDM | INC | 0.805481 | 0.892362 | -0.086881 |

| 13 | SDM | W_CRIME | 0.460443 | 0.598950 | -0.138507 |

| 14 | SDM | W_INC | 0.065907 | -0.600606 | 0.666513 |

| 15 | SDM | rho | 0.329694 | 0.887712 | -0.558019 |

| 16 | SEM | CONSTANT | 47.980071 | 47.943711 | 0.036360 |

| 17 | SEM | CRIME | -0.564235 | -0.555717 | -0.008518 |

| 18 | SEM | INC | 0.734156 | 0.705981 | 0.028175 |

| 19 | SEM | lambda | 0.426726 | 0.372301 | 0.054426 |

| 20 | SLX | CONSTANT | 27.696533 | 27.727885 | -0.031351 |

| 21 | SLX | CRIME | -0.591790 | -0.590675 | -0.001114 |

| 22 | SLX | INC | 0.743739 | 0.742442 | 0.001297 |

| 23 | SLX | W_CRIME | 0.390632 | 0.390156 | 0.000476 |

| 24 | SLX | W_INC | 0.487975 | 0.486179 | 0.001795 |

Direct/Indirect/Total effects comparison¶

display(spatial_effects_comparison)

| model | feature | direct_bayespecon | indirect_bayespecon | total_bayespecon | direct_spreg | indirect_spreg | total_spreg | direct_difference | indirect_difference | total_difference | |

|---|---|---|---|---|---|---|---|---|---|---|---|

| 0 | SLX | INC | 0.743739 | 0.487975 | 1.231714 | 0.742442 | 0.486179 | 1.228621 | 0.001297 | 0.001795 | 0.003093 |

| 1 | SLX | CRIME | -0.591790 | 0.390632 | -0.201157 | -0.590675 | 0.390156 | -0.200519 | -0.001114 | 0.000476 | -0.000638 |

| 2 | SAR | INC | 0.572928 | 0.156536 | 0.729463 | 0.695416 | -0.131296 | 0.564120 | -0.122488 | 0.287832 | 0.165344 |

| 3 | SAR | CRIME | -0.459952 | -0.137418 | -0.597370 | -0.519068 | 0.098001 | -0.421067 | 0.059116 | -0.235419 | -0.176303 |

| 4 | SEM | INC | 0.734156 | 0.000000 | 0.734156 | 0.705981 | 0.000000 | 0.705981 | 0.028175 | 0.000000 | 0.028175 |

| 5 | SEM | CRIME | -0.564235 | 0.000000 | -0.564235 | -0.555717 | 0.000000 | -0.555717 | -0.008518 | 0.000000 | -0.008518 |

| 6 | SDM | INC | 0.836697 | 0.480682 | 1.317378 | 0.892362 | 1.705923 | 2.598284 | -0.055665 | -1.225241 | -1.280906 |

| 7 | SDM | CRIME | -0.581898 | 0.362772 | -0.219126 | -0.634137 | 0.320776 | -0.313360 | 0.052238 | 0.041996 | 0.094234 |

| 8 | SDEM | INC | 0.885285 | 0.545364 | 1.430649 | 0.820920 | 0.478086 | 1.299005 | 0.064365 | 0.067278 | 0.131643 |

| 9 | SDEM | CRIME | -0.568961 | 0.267135 | -0.301826 | -0.575430 | 0.300693 | -0.274737 | 0.006469 | -0.033559 | -0.027089 |

bayespecon uses Bayesian inference while spreg reports frequentist point estimates, so exact equality is not expected. The coefficient and effects tables are intended to verify directional and numerical agreement under matched model formulas with HOVAL as the dependent variable.

For effects, this notebook now uses spreg’s own spatial-impact utilities: spreg.sputils.spmultiplier and spreg.sputils._sp_effects. SEM is the one exception, since it has no spatial multiplier on the systematic component and therefore uses direct = coefficient, indirect = 0, total = coefficient.

Note on intercept exclusion: bayespecon excludes the intercept from spatial effects for all model types, since the intercept has no meaningful spatial impact interpretation. This is consistent with spreg’s _sp_effects, which also excludes the constant (CONSTANT) from its impact calculations.

Example: Synthetic Data on a 30x30 Grid¶

This example demonstrates how to generate synthetic spatial data on a 30x30 regular grid, construct a spatial weights matrix, and fit a cross-sectional spatial regression model using the bayespecon package.

from bayespecon import SAR, dgp

# Set random seed for reproducibility

np.random.seed(42)

# True parameters

beta_true = np.array([1.0, -1.0])

rho_true = 0.5

sigma_true = 1.0

gdf = dgp.simulate_sar(

n=40,

beta=beta_true,

rho=rho_true,

sigma=sigma_true,

create_gdf=True,

contiguity="queen",

)

w = libpysal.graph.Graph.build_contiguity(gdf, rook=False).transform("r")

# Fit SAR model

model = SAR("y ~ X_1", W=w, data=gdf)

res = model.fit()

model.summary()

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 2 jobs)

NUTS: [rho, beta, sigma]

Sampling 4 chains for 1_000 tune and 2_000 draw iterations (4_000 + 8_000 draws total) took 15 seconds.

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/rich/live.py:260: UserWarning: install "ipywidgets"

for Jupyter support

warnings.warn('install "ipywidgets" for Jupyter support')

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | 1.022 | 0.061 | 0.910 | 1.142 | 0.001 | 0.001 | 3900.0 | 4216.0 | 1.0 |

| X_1 | -1.031 | 0.026 | -1.077 | -0.980 | 0.000 | 0.000 | 5903.0 | 4761.0 | 1.0 |

| rho | 0.492 | 0.028 | 0.438 | 0.546 | 0.000 | 0.000 | 3840.0 | 4046.0 | 1.0 |

| sigma | 1.044 | 0.019 | 1.009 | 1.078 | 0.000 | 0.000 | 5405.0 | 4902.0 | 1.0 |

model.spatial_effects()

| direct | direct_ci_lower | direct_ci_upper | direct_pvalue | indirect | indirect_ci_lower | indirect_ci_upper | indirect_pvalue | total | total_ci_lower | total_ci_upper | total_pvalue | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| variable | ||||||||||||

| X_1 | -1.075204 | -1.129604 | -1.021696 | 0.0 | -0.960471 | -1.196122 | -0.758757 | 0.0 | -2.035675 | -2.292644 | -1.809266 | 0.0 |

sim_spreg = spreg.GM_Lag(

gdf["y"],

gdf["X_1"],

w=w,

)

GM_Lag

print(sim_spreg.summary)

REGRESSION RESULTS

------------------

SUMMARY OF OUTPUT: SPATIAL TWO STAGE LEAST SQUARES

------------------------------------------------------------------------------------

Data set : unknown

Weights matrix : unknown

Dependent Variable : y Number of Observations: 1600

Mean dependent var : 1.9513 Number of Variables : 3

S.D. dependent var : 1.5702 Degrees of Freedom : 1597

Pseudo R-squared : 0.5518

Spatial Pseudo R-squared: 0.4798

------------------------------------------------------------------------------------

Variable Coefficient Std.Error z-Statistic Probability

------------------------------------------------------------------------------------

CONSTANT 1.18652 0.10618 11.17475 0.00000

X_1 -1.03619 0.02651 -39.08998 0.00000

W_y 0.40827 0.05254 7.77095 0.00000

------------------------------------------------------------------------------------

Instrumented: W_y

Instruments: W_X_1

DIAGNOSTICS FOR SPATIAL DEPENDENCE

TEST DF VALUE PROB

Anselin-Kelejian Test 1 2.010 0.1563

SPATIAL LAG MODEL IMPACTS

Impacts computed using the 'simple' method.

Variable Direct Indirect Total

X_1 -1.0362 -0.7149 -1.7511

================================ END OF REPORT =====================================