Bayesian Spatial Panel Models¶

This notebook demonstrates the panel-model classes in bayespecon:

Fixed effects / pooled panel models:

OLSPanelFE,SARPanelFE,SEMPanelFE,SDMPanelFE,SDEMPanelFERandom effects panel models:

OLSPanelRE,SARPanelRE,SEMPanelRE

It also demonstrates Bayesian model comparison via WAIC, LOO-CV, and Bayes factors.

Panel Model Equations¶

Let observations be stacked by time, then unit (panel_g convention).

Fixed-effects / pooled classes:

OLS panel: \(y_{it} = x_{it}'\beta + a_i + \tau_t + \epsilon_{it}\)

SAR panel: \(y_{it} = \rho (Wy)_{it} + x_{it}'\beta + a_i + \tau_t + \epsilon_{it}\)

SEM panel: \(y_{it} = x_{it}'\beta + a_i + \tau_t + u_{it},\; u_{it}=\lambda (Wu)_{it}+\epsilon_{it}\)

SDM panel: \(y_{it} = \rho (Wy)_{it} + x_{it}'\beta + (Wx)_{it}'\theta + a_i + \tau_t + \epsilon_{it}\)

SDEM panel: \(y_{it} = x_{it}'\beta + (Wx)_{it}'\theta + a_i + \tau_t + u_{it},\; u_{it}=\lambda (Wu)_{it}+\epsilon_{it}\)

Random-effects classes:

OLS random effects: \(y_{it} = x_{it}'\beta + \alpha_i + \epsilon_{it},\; \alpha_i \sim N(0, \sigma_\alpha^2)\)

SAR random effects: \(y_{it} = \rho (Wy)_{it} + x_{it}'\beta + \alpha_i + \epsilon_{it},\; \alpha_i \sim N(0, \sigma_\alpha^2)\)

SEM random effects: \(y_{it} = x_{it}'\beta + \alpha_i + u_{it},\; u_{it}=\lambda (Wu)_{it}+\epsilon_{it},\; \alpha_i \sim N(0, \sigma_\alpha^2)\)

The model argument selects the pooled or fixed-effects specification for the FE classes:

0pooled1unit FE2time FE3two-way FE

The RE classes are hierarchical models with unit-level random intercepts, so they internally use the pooled design and estimate sigma_alpha directly.

import arviz as az

import matplotlib.pyplot as plt

import numpy as np

import pandas as pd

from libpysal.graph import Graph

from bayespecon import (

OLSPanelFE,

OLSPanelRE,

SARPanelFE,

SARPanelRE,

SDEMPanelFE,

SDMPanelFE,

SEMPanelFE,

SEMPanelRE,

dgp,

)

az.style.use("arviz-white")

Build a Balanced Panel Dataset¶

For pedagogy, we generate synthetic panel data from the bayespecon.dgp module on a regular polygon grid and then assemble a formula-ready DataFrame.

# Generate base geometry via cross-sectional DGP, then simulate panel data via panel DGP.

rng = np.random.default_rng(2026)

xcols = ["poverty", "rev_rating", "num_spots", "crowded"]

ycol = "price_pp"

base_gdf = dgp.simulate_sar(n=8, seed=2026, create_gdf=True, geometry_type="polygon")

W = Graph.build_contiguity(base_gdf, rook=False).transform("r")

N = len(base_gdf)

T = 4

beta = np.array([1.5, -0.8, 0.6, 0.4, -0.5], dtype=float)

panel_sim = dgp.simulate_panel_sar_fe(

N=N,

T=T,

rho=0.35,

beta=beta,

sigma=1.0,

sigma_alpha=0.5,

rng=rng,

W=W,

)

panel = pd.DataFrame(

{

"unit": panel_sim["unit"],

"time": panel_sim["time"],

ycol: panel_sim["y"],

}

)

for j, name in enumerate(xcols, start=1):

panel[name] = panel_sim["X"][:, j]

Shared Helpers¶

def fit_panel_model(

model_cls, formula, data, W, model=3, draws=300, tune=300, chains=2, seed=42

):

"""Fit a panel model and return (model, summary, effects_df).

Uses minimal MCMC settings for pedagogical demonstration.

Increase draws/tune for real analyses.

"""

m = model_cls(

formula=formula,

data=data,

W=W,

unit_col="unit",

time_col="time",

model=model,

logdet_method="eigenvalue",

)

m.fit(

draws=draws,

tune=tune,

chains=chains,

target_accept=0.9,

random_seed=seed,

progressbar=False,

idata_kwargs={"log_likelihood": True},

)

summary = m.summary(round_to=3)

effects_df = pd.DataFrame(m.spatial_effects())

return m, summary, effects_df

def diagnostics_table(idata, var_names):

"""Show key MCMC diagnostics for the given parameters."""

cols = ["mean", "sd", "ess_bulk", "ess_tail", "r_hat"]

return az.summary(idata, var_names=var_names, round_to=3)[cols]

def show_trace(idata, var_names, title):

"""Plot trace plots for the given parameters."""

az.plot_trace(idata, var_names=var_names)

plt.suptitle(title, y=1.02)

plt.tight_layout()

plt.show()

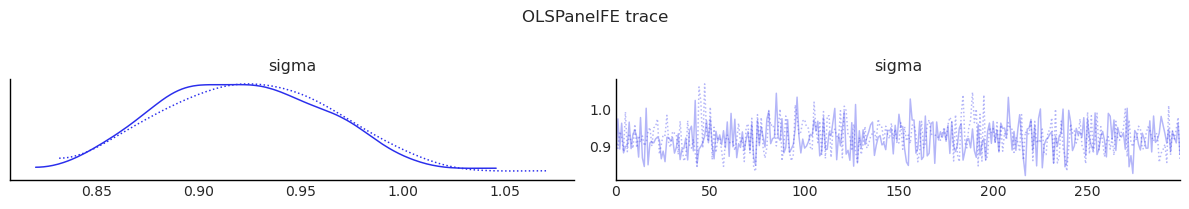

Fit: OLSPanelFE¶

formula = "price_pp ~ poverty + rev_rating + num_spots + crowded"

ols_panel, summary_ols, effects_ols = fit_panel_model(

OLSPanelFE, formula, panel, W, model=3

)

display(summary_ols)

display(effects_ols)

display(diagnostics_table(ols_panel.inference_data, ["beta", "sigma"]))

show_trace(ols_panel.inference_data, ["sigma"], "OLSPanelFE trace")

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (2 chains in 2 jobs)

NUTS: [beta, sigma]

Sampling 2 chains for 300 tune and 300 draw iterations (600 + 600 draws total) took 5 seconds.

We recommend running at least 4 chains for robust computation of convergence diagnostics

/tmp/ipykernel_6941/2596509600.py:42: UserWarning: The figure layout has changed to tight

plt.tight_layout()

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | -17938.848 | 926300.112 | -1696137.603 | 1732990.761 | 33595.169 | 33667.859 | 762.902 | 527.027 | 1.007 |

| poverty | -0.782 | 0.063 | -0.896 | -0.655 | 0.002 | 0.002 | 912.719 | 487.536 | 0.999 |

| rev_rating | 0.620 | 0.067 | 0.501 | 0.759 | 0.002 | 0.003 | 830.066 | 437.377 | 1.001 |

| num_spots | 0.392 | 0.079 | 0.249 | 0.531 | 0.003 | 0.003 | 817.795 | 482.493 | 0.997 |

| crowded | -0.484 | 0.065 | -0.610 | -0.367 | 0.003 | 0.002 | 679.134 | 445.403 | 1.000 |

| sigma | 0.924 | 0.043 | 0.853 | 1.005 | 0.001 | 0.002 | 944.424 | 469.213 | 0.999 |

| direct | direct_ci_lower | direct_ci_upper | direct_pvalue | indirect | indirect_ci_lower | indirect_ci_upper | indirect_pvalue | total | total_ci_lower | total_ci_upper | total_pvalue | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| variable | ||||||||||||

| poverty | -0.781999 | -0.904705 | -0.654624 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | -0.781999 | -0.904705 | -0.654624 | 0.0 |

| rev_rating | 0.619708 | 0.486484 | 0.755268 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.619708 | 0.486484 | 0.755268 | 0.0 |

| num_spots | 0.391925 | 0.245167 | 0.541430 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.391925 | 0.245167 | 0.541430 | 0.0 |

| crowded | -0.484415 | -0.609632 | -0.358080 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | -0.484415 | -0.609632 | -0.358080 | 0.0 |

| mean | sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|

| beta[Intercept] | -17938.848 | 926300.112 | 762.902 | 527.027 | 1.007 |

| beta[poverty] | -0.782 | 0.063 | 912.719 | 487.536 | 0.999 |

| beta[rev_rating] | 0.620 | 0.067 | 830.066 | 437.377 | 1.001 |

| beta[num_spots] | 0.392 | 0.079 | 817.795 | 482.493 | 0.997 |

| beta[crowded] | -0.484 | 0.065 | 679.134 | 445.403 | 1.000 |

| sigma | 0.924 | 0.043 | 944.424 | 469.213 | 0.999 |

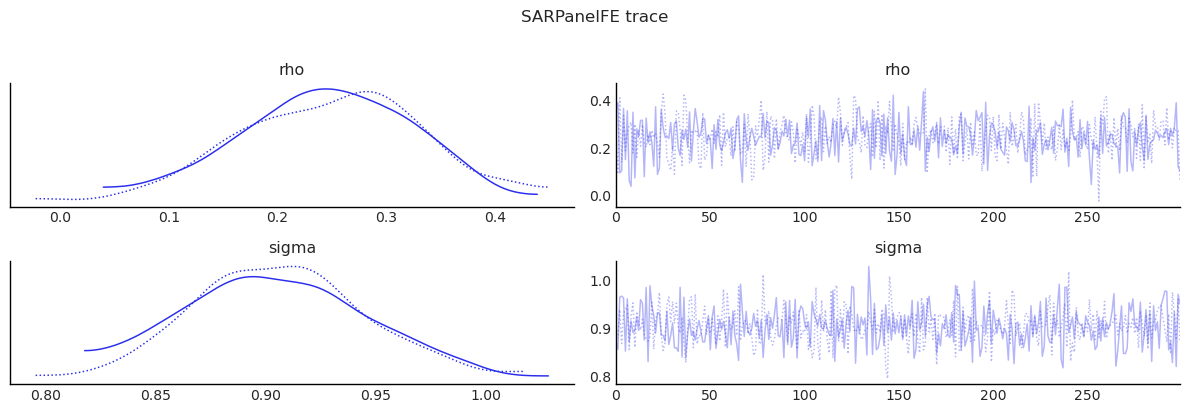

Fit: SARPanelFE¶

sar_panel, summary_sar, effects_sar = fit_panel_model(

SARPanelFE, formula, panel, W, model=3

)

display(summary_sar)

display(effects_sar)

display(diagnostics_table(sar_panel.inference_data, ["rho", "beta", "sigma"]))

show_trace(sar_panel.inference_data, ["rho", "sigma"], "SARPanelFE trace")

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (2 chains in 2 jobs)

NUTS: [rho, beta, sigma]

Sampling 2 chains for 300 tune and 300 draw iterations (600 + 600 draws total) took 5 seconds.

We recommend running at least 4 chains for robust computation of convergence diagnostics

/tmp/ipykernel_6941/2596509600.py:42: UserWarning: The figure layout has changed to tight

plt.tight_layout()

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | -7523.979 | 992361.836 | -2097730.467 | 1758795.614 | 32039.615 | 45066.998 | 962.241 | 369.441 | 1.008 |

| poverty | -0.784 | 0.063 | -0.905 | -0.674 | 0.002 | 0.002 | 952.220 | 457.932 | 1.001 |

| rev_rating | 0.620 | 0.068 | 0.501 | 0.748 | 0.002 | 0.002 | 1098.712 | 529.286 | 0.998 |

| num_spots | 0.376 | 0.078 | 0.248 | 0.532 | 0.002 | 0.003 | 1086.159 | 522.337 | 0.997 |

| crowded | -0.497 | 0.059 | -0.599 | -0.384 | 0.002 | 0.002 | 948.160 | 539.865 | 1.000 |

| rho | 0.247 | 0.079 | 0.089 | 0.382 | 0.002 | 0.003 | 1109.673 | 386.768 | 1.000 |

| sigma | 0.905 | 0.039 | 0.830 | 0.977 | 0.001 | 0.002 | 1073.689 | 498.609 | 1.003 |

| direct | direct_ci_lower | direct_ci_upper | direct_pvalue | indirect | indirect_ci_lower | indirect_ci_upper | indirect_pvalue | total | total_ci_lower | total_ci_upper | total_pvalue | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| variable | ||||||||||||

| poverty | -0.793988 | -0.918759 | -0.675208 | 0.0 | -0.259279 | -0.494637 | -0.073006 | 0.003333 | -1.053267 | -1.347461 | -0.804549 | 0.0 |

| rev_rating | 0.627874 | 0.492226 | 0.756036 | 0.0 | 0.205394 | 0.057567 | 0.396422 | 0.003333 | 0.833268 | 0.597306 | 1.122249 | 0.0 |

| num_spots | 0.380562 | 0.229926 | 0.536363 | 0.0 | 0.123320 | 0.031742 | 0.244520 | 0.003333 | 0.503882 | 0.290485 | 0.749359 | 0.0 |

| crowded | -0.503408 | -0.625725 | -0.395580 | 0.0 | -0.164919 | -0.319672 | -0.041843 | 0.003333 | -0.668327 | -0.894285 | -0.469148 | 0.0 |

| mean | sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|

| rho | 0.247 | 0.079 | 1109.673 | 386.768 | 1.000 |

| beta[Intercept] | -7523.979 | 992361.836 | 962.241 | 369.441 | 1.008 |

| beta[poverty] | -0.784 | 0.063 | 952.220 | 457.932 | 1.001 |

| beta[rev_rating] | 0.620 | 0.068 | 1098.712 | 529.286 | 0.998 |

| beta[num_spots] | 0.376 | 0.078 | 1086.159 | 522.337 | 0.997 |

| beta[crowded] | -0.497 | 0.059 | 948.160 | 539.865 | 1.000 |

| sigma | 0.905 | 0.039 | 1073.689 | 498.609 | 1.003 |

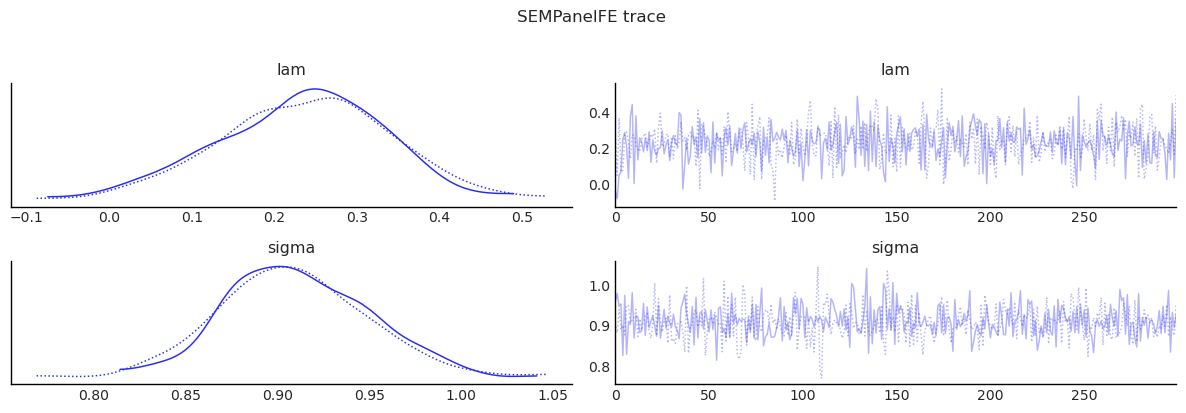

Fit: SEMPanelFE¶

sem_panel, summary_sem, effects_sem = fit_panel_model(

SEMPanelFE, formula, panel, W, model=3

)

display(summary_sem)

display(effects_sem)

display(diagnostics_table(sem_panel.inference_data, ["lam", "beta", "sigma"]))

show_trace(sem_panel.inference_data, ["lam", "sigma"], "SEMPanelFE trace")

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (2 chains in 2 jobs)

NUTS: [lam, beta, sigma]

Sampling 2 chains for 300 tune and 300 draw iterations (600 + 600 draws total) took 5 seconds.

We recommend running at least 4 chains for robust computation of convergence diagnostics

/tmp/ipykernel_6941/2596509600.py:42: UserWarning: The figure layout has changed to tight

plt.tight_layout()

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | -14267.039 | 999416.638 | -1825959.455 | 1923500.648 | 34167.777 | 41279.721 | 849.889 | 473.838 | 1.000 |

| poverty | -0.774 | 0.057 | -0.876 | -0.677 | 0.002 | 0.002 | 1172.276 | 388.730 | 0.999 |

| rev_rating | 0.599 | 0.066 | 0.478 | 0.725 | 0.002 | 0.003 | 1030.047 | 412.009 | 1.003 |

| num_spots | 0.360 | 0.081 | 0.193 | 0.504 | 0.002 | 0.003 | 1435.977 | 362.773 | 1.000 |

| crowded | -0.495 | 0.062 | -0.616 | -0.391 | 0.002 | 0.002 | 998.956 | 481.011 | 1.000 |

| lam | 0.234 | 0.102 | 0.030 | 0.405 | 0.003 | 0.004 | 988.914 | 523.364 | 1.002 |

| sigma | 0.910 | 0.040 | 0.834 | 0.982 | 0.001 | 0.002 | 1044.759 | 433.540 | 1.000 |

| direct | direct_ci_lower | direct_ci_upper | direct_pvalue | indirect | indirect_ci_lower | indirect_ci_upper | indirect_pvalue | total | total_ci_lower | total_ci_upper | total_pvalue | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| variable | ||||||||||||

| poverty | -0.774256 | -0.887344 | -0.675279 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | -0.774256 | -0.887344 | -0.675279 | 0.0 |

| rev_rating | 0.599067 | 0.465079 | 0.723677 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.599067 | 0.465079 | 0.723677 | 0.0 |

| num_spots | 0.359547 | 0.195547 | 0.521156 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.359547 | 0.195547 | 0.521156 | 0.0 |

| crowded | -0.494852 | -0.609601 | -0.371725 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | -0.494852 | -0.609601 | -0.371725 | 0.0 |

| mean | sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|

| lam | 0.234 | 0.102 | 988.914 | 523.364 | 1.002 |

| beta[Intercept] | -14267.039 | 999416.638 | 849.889 | 473.838 | 1.000 |

| beta[poverty] | -0.774 | 0.057 | 1172.276 | 388.730 | 0.999 |

| beta[rev_rating] | 0.599 | 0.066 | 1030.047 | 412.009 | 1.003 |

| beta[num_spots] | 0.360 | 0.081 | 1435.977 | 362.773 | 1.000 |

| beta[crowded] | -0.495 | 0.062 | 998.956 | 481.011 | 1.000 |

| sigma | 0.910 | 0.040 | 1044.759 | 433.540 | 1.000 |

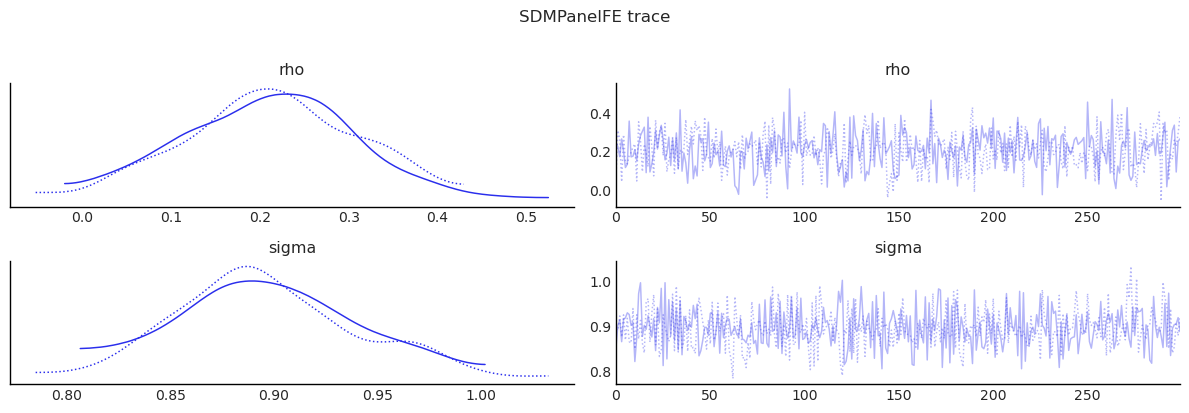

Fit: SDMPanelFE¶

sdm_panel, summary_sdm, effects_sdm = fit_panel_model(

SDMPanelFE, formula, panel, W, model=3

)

display(summary_sdm)

display(effects_sdm)

display(diagnostics_table(sdm_panel.inference_data, ["rho", "beta", "sigma"]))

show_trace(sdm_panel.inference_data, ["rho", "sigma"], "SDMPanelFE trace")

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (2 chains in 2 jobs)

NUTS: [rho, beta, sigma]

Sampling 2 chains for 300 tune and 300 draw iterations (600 + 600 draws total) took 5 seconds.

We recommend running at least 4 chains for robust computation of convergence diagnostics

The rhat statistic is larger than 1.01 for some parameters. This indicates problems during sampling. See https://arxiv.org/abs/1903.08008 for details

/tmp/ipykernel_6941/2596509600.py:42: UserWarning: The figure layout has changed to tight

plt.tight_layout()

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | -24752.183 | 982197.524 | -1654916.793 | 1804126.774 | 36778.036 | 34422.543 | 722.319 | 549.061 | 0.999 |

| poverty | -0.776 | 0.063 | -0.889 | -0.660 | 0.002 | 0.002 | 1023.652 | 582.222 | 1.001 |

| rev_rating | 0.645 | 0.071 | 0.505 | 0.773 | 0.002 | 0.003 | 813.981 | 396.745 | 1.002 |

| num_spots | 0.362 | 0.078 | 0.214 | 0.503 | 0.003 | 0.003 | 864.442 | 395.851 | 1.010 |

| crowded | -0.487 | 0.063 | -0.598 | -0.364 | 0.002 | 0.003 | 1037.770 | 409.853 | 1.000 |

| W*poverty | 0.047 | 0.168 | -0.291 | 0.318 | 0.005 | 0.006 | 958.936 | 542.077 | 0.998 |

| W*rev_rating | 0.265 | 0.195 | -0.064 | 0.676 | 0.006 | 0.007 | 1251.983 | 584.302 | 1.001 |

| W*num_spots | 0.425 | 0.182 | 0.065 | 0.738 | 0.007 | 0.006 | 736.548 | 501.047 | 1.000 |

| W*crowded | 0.216 | 0.181 | -0.114 | 0.532 | 0.006 | 0.007 | 817.109 | 452.733 | 1.011 |

| rho | 0.210 | 0.097 | 0.026 | 0.382 | 0.004 | 0.003 | 701.806 | 528.564 | 0.999 |

| sigma | 0.896 | 0.043 | 0.825 | 0.983 | 0.001 | 0.002 | 946.084 | 425.984 | 1.003 |

| direct | direct_ci_lower | direct_ci_upper | direct_pvalue | indirect | indirect_ci_lower | indirect_ci_upper | indirect_pvalue | total | total_ci_lower | total_ci_upper | total_pvalue | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| variable | ||||||||||||

| poverty | -0.780322 | -0.900699 | -0.657682 | 0.0 | -0.143807 | -0.516414 | 0.199634 | 0.413333 | -0.924129 | -1.356625 | -0.506935 | 0.00 |

| rev_rating | 0.659615 | 0.510719 | 0.809888 | 0.0 | 0.500142 | 0.030851 | 1.013816 | 0.036667 | 1.159758 | 0.630355 | 1.724315 | 0.00 |

| num_spots | 0.380866 | 0.227593 | 0.535018 | 0.0 | 0.623865 | 0.220483 | 1.079395 | 0.000000 | 1.004731 | 0.551044 | 1.468786 | 0.00 |

| crowded | -0.483225 | -0.608166 | -0.354449 | 0.0 | 0.139372 | -0.281942 | 0.585426 | 0.533333 | -0.343852 | -0.817538 | 0.126599 | 0.17 |

| mean | sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|

| rho | 0.210 | 0.097 | 701.806 | 528.564 | 0.999 |

| beta[Intercept] | -24752.183 | 982197.524 | 722.319 | 549.061 | 0.999 |

| beta[poverty] | -0.776 | 0.063 | 1023.652 | 582.222 | 1.001 |

| beta[rev_rating] | 0.645 | 0.071 | 813.981 | 396.745 | 1.002 |

| beta[num_spots] | 0.362 | 0.078 | 864.442 | 395.851 | 1.010 |

| beta[crowded] | -0.487 | 0.063 | 1037.770 | 409.853 | 1.000 |

| beta[W*poverty] | 0.047 | 0.168 | 958.936 | 542.077 | 0.998 |

| beta[W*rev_rating] | 0.265 | 0.195 | 1251.983 | 584.302 | 1.001 |

| beta[W*num_spots] | 0.425 | 0.182 | 736.548 | 501.047 | 1.000 |

| beta[W*crowded] | 0.216 | 0.181 | 817.109 | 452.733 | 1.011 |

| sigma | 0.896 | 0.043 | 946.084 | 425.984 | 1.003 |

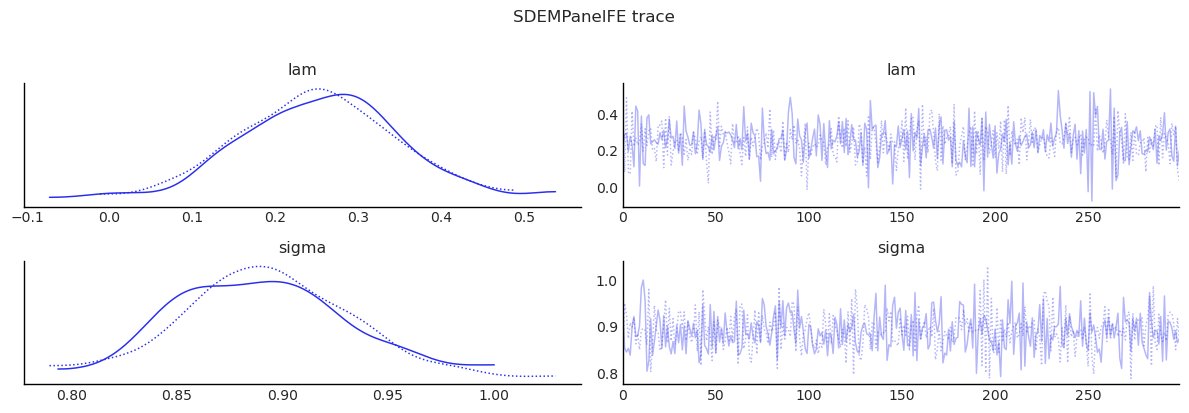

Fit: SDEMPanelFE¶

sdem_panel, summary_sdem, effects_sdem = fit_panel_model(

SDEMPanelFE, formula, panel, W, model=3

)

display(summary_sdem)

display(effects_sdem)

display(diagnostics_table(sdem_panel.inference_data, ["lam", "beta", "sigma"]))

show_trace(sdem_panel.inference_data, ["lam", "sigma"], "SDEMPanelFE trace")

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (2 chains in 2 jobs)

NUTS: [lam, beta, sigma]

Sampling 2 chains for 300 tune and 300 draw iterations (600 + 600 draws total) took 5 seconds.

We recommend running at least 4 chains for robust computation of convergence diagnostics

/tmp/ipykernel_6941/2596509600.py:42: UserWarning: The figure layout has changed to tight

plt.tight_layout()

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | -62308.781 | 994398.703 | -1888657.675 | 1808447.433 | 32961.272 | 35730.652 | 913.602 | 488.787 | 0.999 |

| poverty | -0.782 | 0.066 | -0.901 | -0.668 | 0.002 | 0.002 | 1120.080 | 612.915 | 1.006 |

| rev_rating | 0.658 | 0.074 | 0.517 | 0.787 | 0.003 | 0.002 | 727.012 | 514.565 | 1.000 |

| num_spots | 0.385 | 0.076 | 0.240 | 0.530 | 0.003 | 0.004 | 843.411 | 386.382 | 1.001 |

| crowded | -0.488 | 0.069 | -0.617 | -0.357 | 0.002 | 0.003 | 785.644 | 466.825 | 1.001 |

| W*poverty | -0.153 | 0.159 | -0.443 | 0.140 | 0.005 | 0.006 | 1084.319 | 527.507 | 0.999 |

| W*rev_rating | 0.439 | 0.212 | 0.039 | 0.825 | 0.009 | 0.008 | 575.717 | 538.630 | 1.001 |

| W*num_spots | 0.604 | 0.221 | 0.206 | 1.006 | 0.008 | 0.008 | 806.898 | 488.761 | 0.999 |

| W*crowded | 0.113 | 0.189 | -0.276 | 0.443 | 0.006 | 0.006 | 849.394 | 481.011 | 1.004 |

| lam | 0.253 | 0.097 | 0.084 | 0.449 | 0.003 | 0.005 | 1325.447 | 437.607 | 1.002 |

| sigma | 0.891 | 0.040 | 0.816 | 0.966 | 0.001 | 0.002 | 1022.757 | 531.812 | 0.999 |

| direct | direct_ci_lower | direct_ci_upper | direct_pvalue | indirect | indirect_ci_lower | indirect_ci_upper | indirect_pvalue | total | total_ci_lower | total_ci_upper | total_pvalue | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| variable | ||||||||||||

| poverty | -0.782128 | -0.902605 | -0.656286 | 0.0 | -0.153335 | -0.486446 | 0.127443 | 0.343333 | -0.935463 | -1.312488 | -0.596659 | 0.00 |

| rev_rating | 0.657944 | 0.525386 | 0.808370 | 0.0 | 0.438747 | 0.044381 | 0.877121 | 0.026667 | 1.096691 | 0.626058 | 1.621940 | 0.00 |

| num_spots | 0.384577 | 0.232888 | 0.537989 | 0.0 | 0.603960 | 0.189739 | 1.010107 | 0.006667 | 0.988537 | 0.514463 | 1.459270 | 0.00 |

| crowded | -0.488016 | -0.622812 | -0.351537 | 0.0 | 0.113423 | -0.273240 | 0.478564 | 0.530000 | -0.374593 | -0.829240 | 0.091980 | 0.09 |

| mean | sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|

| lam | 0.253 | 0.097 | 1325.447 | 437.607 | 1.002 |

| beta[Intercept] | -62308.781 | 994398.703 | 913.602 | 488.787 | 0.999 |

| beta[poverty] | -0.782 | 0.066 | 1120.080 | 612.915 | 1.006 |

| beta[rev_rating] | 0.658 | 0.074 | 727.012 | 514.565 | 1.000 |

| beta[num_spots] | 0.385 | 0.076 | 843.411 | 386.382 | 1.001 |

| beta[crowded] | -0.488 | 0.069 | 785.644 | 466.825 | 1.001 |

| beta[W*poverty] | -0.153 | 0.159 | 1084.319 | 527.507 | 0.999 |

| beta[W*rev_rating] | 0.439 | 0.212 | 575.717 | 538.630 | 1.001 |

| beta[W*num_spots] | 0.604 | 0.221 | 806.898 | 488.761 | 0.999 |

| beta[W*crowded] | 0.113 | 0.189 | 849.394 | 481.011 | 1.004 |

| sigma | 0.891 | 0.040 | 1022.757 | 531.812 | 0.999 |

MCMC adequacy for the spatial parameter¶

Panel SAR/SDEM models inherit the slow-mixing behaviour of \(\rho\)

(or \(\lambda\)) documented by Wolf, Anselin & Arribas-Bel (2018)

[Wolf et al., 2018]. The

spatial_mcmc_diagnostic helper checks ESS, sampler yield, \(\hat{R}\),

and HPDI stability for the spatial scalar.

from bayespecon import spatial_mcmc_diagnostic

spatial_mcmc_diagnostic(sdm_panel, emit_warnings=False).to_frame()

| ess_bulk | ess_tail | r_hat | mcse_mean | yield_pct | hpdi_drift_pct | adequate | |

|---|---|---|---|---|---|---|---|

| parameter | |||||||

| rho | 701.806415 | 528.564363 | 0.998564 | 0.003705 | 116.967736 | 0.452301 | False |

Random-Effects Panel Models¶

The random-effects models replace deterministic unit fixed effects with latent unit intercepts \(\alpha_i \sim N(0, \sigma_\alpha^2)\). That lets the notebook estimate the variance of unit heterogeneity directly and compare FE and RE behavior on the same synthetic balanced panel.

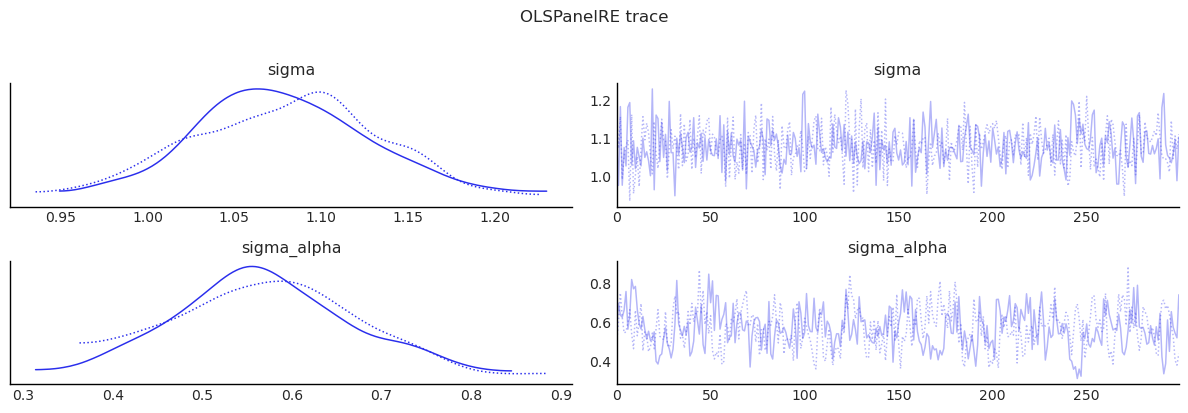

Fit: OLSPanelRE¶

ols_panel_re, summary_ols_re, effects_ols_re = fit_panel_model(

OLSPanelRE, formula, panel, W, model=0

)

display(summary_ols_re)

display(effects_ols_re)

display(

diagnostics_table(ols_panel_re.inference_data, ["beta", "sigma", "sigma_alpha"])

)

show_trace(ols_panel_re.inference_data, ["sigma", "sigma_alpha"], "OLSPanelRE trace")

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (2 chains in 2 jobs)

NUTS: [beta, sigma, sigma_alpha, alpha]

Sampling 2 chains for 300 tune and 300 draw iterations (600 + 600 draws total) took 5 seconds.

We recommend running at least 4 chains for robust computation of convergence diagnostics

The rhat statistic is larger than 1.01 for some parameters. This indicates problems during sampling. See https://arxiv.org/abs/1903.08008 for details

The effective sample size per chain is smaller than 100 for some parameters. A higher number is needed for reliable rhat and ess computation. See https://arxiv.org/abs/1903.08008 for details

/tmp/ipykernel_6941/2596509600.py:42: UserWarning: The figure layout has changed to tight

plt.tight_layout()

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | 2.316 | 0.098 | 2.139 | 2.495 | 0.004 | 0.003 | 527.418 | 426.632 | 1.007 |

| poverty | -0.824 | 0.070 | -0.946 | -0.682 | 0.002 | 0.003 | 928.816 | 395.270 | 1.003 |

| rev_rating | 0.640 | 0.079 | 0.479 | 0.773 | 0.002 | 0.004 | 1550.899 | 348.810 | 1.000 |

| num_spots | 0.422 | 0.084 | 0.277 | 0.598 | 0.003 | 0.004 | 887.929 | 405.400 | 1.003 |

| crowded | -0.467 | 0.068 | -0.583 | -0.337 | 0.002 | 0.002 | 1092.684 | 528.638 | 1.002 |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| alpha[61] | -0.412 | 0.413 | -1.166 | 0.330 | 0.010 | 0.016 | 1666.891 | 553.033 | 1.000 |

| alpha[62] | -0.474 | 0.399 | -1.182 | 0.308 | 0.013 | 0.017 | 937.485 | 421.681 | 1.007 |

| alpha[63] | -0.083 | 0.378 | -0.805 | 0.566 | 0.011 | 0.015 | 1287.055 | 514.661 | 1.009 |

| sigma | 1.079 | 0.054 | 0.972 | 1.169 | 0.002 | 0.002 | 721.117 | 484.639 | 1.003 |

| sigma_alpha | 0.571 | 0.101 | 0.387 | 0.757 | 0.007 | 0.003 | 183.612 | 272.620 | 1.001 |

71 rows × 9 columns

| direct | direct_ci_lower | direct_ci_upper | direct_pvalue | indirect | indirect_ci_lower | indirect_ci_upper | indirect_pvalue | total | total_ci_lower | total_ci_upper | total_pvalue | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| variable | ||||||||||||

| poverty | -0.824451 | -0.960888 | -0.686160 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | -0.824451 | -0.960888 | -0.686160 | 0.0 |

| rev_rating | 0.640127 | 0.488938 | 0.797677 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.640127 | 0.488938 | 0.797677 | 0.0 |

| num_spots | 0.422231 | 0.255925 | 0.592860 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.422231 | 0.255925 | 0.592860 | 0.0 |

| crowded | -0.467156 | -0.587586 | -0.328555 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | -0.467156 | -0.587586 | -0.328555 | 0.0 |

| mean | sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|

| beta[Intercept] | 2.316 | 0.098 | 527.418 | 426.632 | 1.007 |

| beta[poverty] | -0.824 | 0.070 | 928.816 | 395.270 | 1.003 |

| beta[rev_rating] | 0.640 | 0.079 | 1550.899 | 348.810 | 1.000 |

| beta[num_spots] | 0.422 | 0.084 | 887.929 | 405.400 | 1.003 |

| beta[crowded] | -0.467 | 0.068 | 1092.684 | 528.638 | 1.002 |

| sigma | 1.079 | 0.054 | 721.117 | 484.639 | 1.003 |

| sigma_alpha | 0.571 | 0.101 | 183.612 | 272.620 | 1.001 |

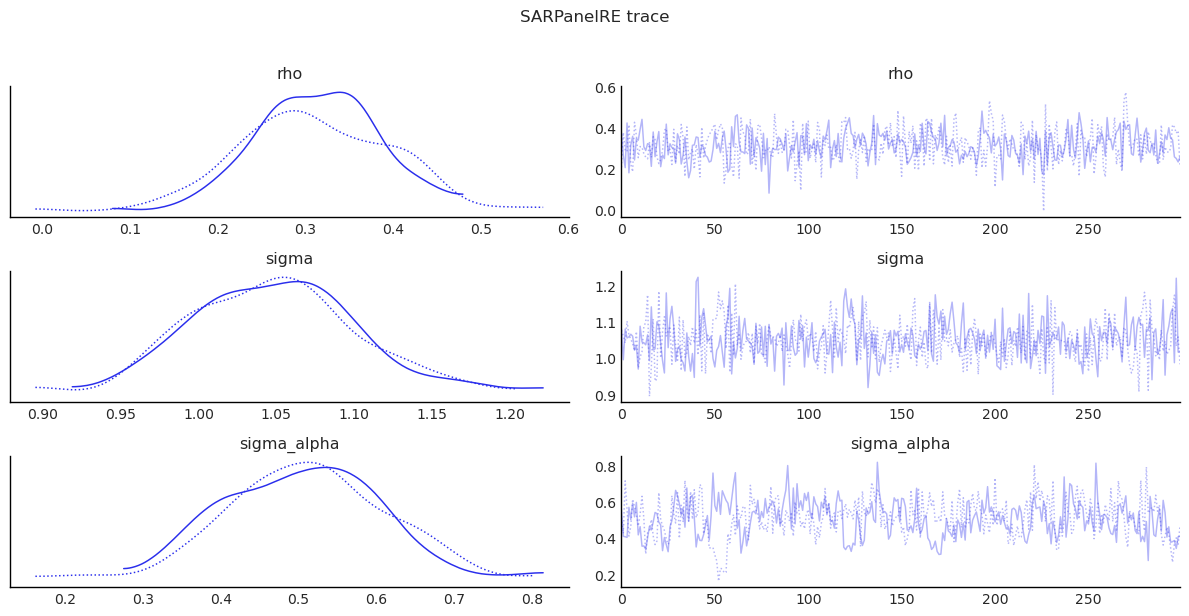

Fit: SARPanelRE¶

sar_panel_re, summary_sar_re, effects_sar_re = fit_panel_model(

SARPanelRE, formula, panel, W, model=0

)

display(summary_sar_re)

display(effects_sar_re)

display(

diagnostics_table(

sar_panel_re.inference_data, ["rho", "beta", "sigma", "sigma_alpha"]

)

)

show_trace(

sar_panel_re.inference_data, ["rho", "sigma", "sigma_alpha"], "SARPanelRE trace"

)

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (2 chains in 2 jobs)

NUTS: [rho, beta, sigma, sigma_alpha, alpha]

Sampling 2 chains for 300 tune and 300 draw iterations (600 + 600 draws total) took 6 seconds.

We recommend running at least 4 chains for robust computation of convergence diagnostics

The rhat statistic is larger than 1.01 for some parameters. This indicates problems during sampling. See https://arxiv.org/abs/1903.08008 for details

The effective sample size per chain is smaller than 100 for some parameters. A higher number is needed for reliable rhat and ess computation. See https://arxiv.org/abs/1903.08008 for details

/tmp/ipykernel_6941/2596509600.py:42: UserWarning: The figure layout has changed to tight

plt.tight_layout()

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | 1.561 | 0.201 | 1.204 | 1.925 | 0.009 | 0.007 | 549.789 | 423.633 | 1.010 |

| poverty | -0.826 | 0.070 | -0.957 | -0.697 | 0.002 | 0.003 | 1036.648 | 407.204 | 1.000 |

| rev_rating | 0.640 | 0.070 | 0.491 | 0.750 | 0.002 | 0.003 | 1354.377 | 437.158 | 1.004 |

| num_spots | 0.389 | 0.083 | 0.240 | 0.539 | 0.003 | 0.003 | 921.499 | 415.933 | 1.005 |

| crowded | -0.477 | 0.062 | -0.602 | -0.357 | 0.002 | 0.002 | 894.167 | 486.736 | 1.000 |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| alpha[62] | -0.460 | 0.375 | -1.218 | 0.172 | 0.014 | 0.014 | 702.846 | 437.556 | 1.004 |

| alpha[63] | -0.052 | 0.344 | -0.711 | 0.580 | 0.013 | 0.014 | 736.514 | 478.444 | 1.007 |

| rho | 0.312 | 0.077 | 0.184 | 0.461 | 0.003 | 0.003 | 644.918 | 514.062 | 1.011 |

| sigma | 1.050 | 0.055 | 0.956 | 1.159 | 0.003 | 0.002 | 459.750 | 586.529 | 1.005 |

| sigma_alpha | 0.508 | 0.102 | 0.323 | 0.683 | 0.008 | 0.005 | 151.937 | 139.059 | 1.007 |

72 rows × 9 columns

| direct | direct_ci_lower | direct_ci_upper | direct_pvalue | indirect | indirect_ci_lower | indirect_ci_upper | indirect_pvalue | total | total_ci_lower | total_ci_upper | total_pvalue | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| variable | ||||||||||||

| poverty | -0.842028 | -0.972979 | -0.693696 | 0.0 | -0.373316 | -0.654718 | -0.157823 | 0.003333 | -1.215344 | -1.582737 | -0.935804 | 0.0 |

| rev_rating | 0.652656 | 0.510232 | 0.802243 | 0.0 | 0.290110 | 0.114401 | 0.504586 | 0.003333 | 0.942765 | 0.680550 | 1.262170 | 0.0 |

| num_spots | 0.396211 | 0.236069 | 0.553332 | 0.0 | 0.174922 | 0.068301 | 0.327676 | 0.003333 | 0.571133 | 0.316314 | 0.834967 | 0.0 |

| crowded | -0.486311 | -0.611548 | -0.354924 | 0.0 | -0.216691 | -0.409040 | -0.087249 | 0.003333 | -0.703003 | -0.978464 | -0.468569 | 0.0 |

| mean | sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|

| rho | 0.312 | 0.077 | 644.918 | 514.062 | 1.011 |

| beta[Intercept] | 1.561 | 0.201 | 549.789 | 423.633 | 1.010 |

| beta[poverty] | -0.826 | 0.070 | 1036.648 | 407.204 | 1.000 |

| beta[rev_rating] | 0.640 | 0.070 | 1354.377 | 437.158 | 1.004 |

| beta[num_spots] | 0.389 | 0.083 | 921.499 | 415.933 | 1.005 |

| beta[crowded] | -0.477 | 0.062 | 894.167 | 486.736 | 1.000 |

| sigma | 1.050 | 0.055 | 459.750 | 586.529 | 1.005 |

| sigma_alpha | 0.508 | 0.102 | 151.937 | 139.059 | 1.007 |

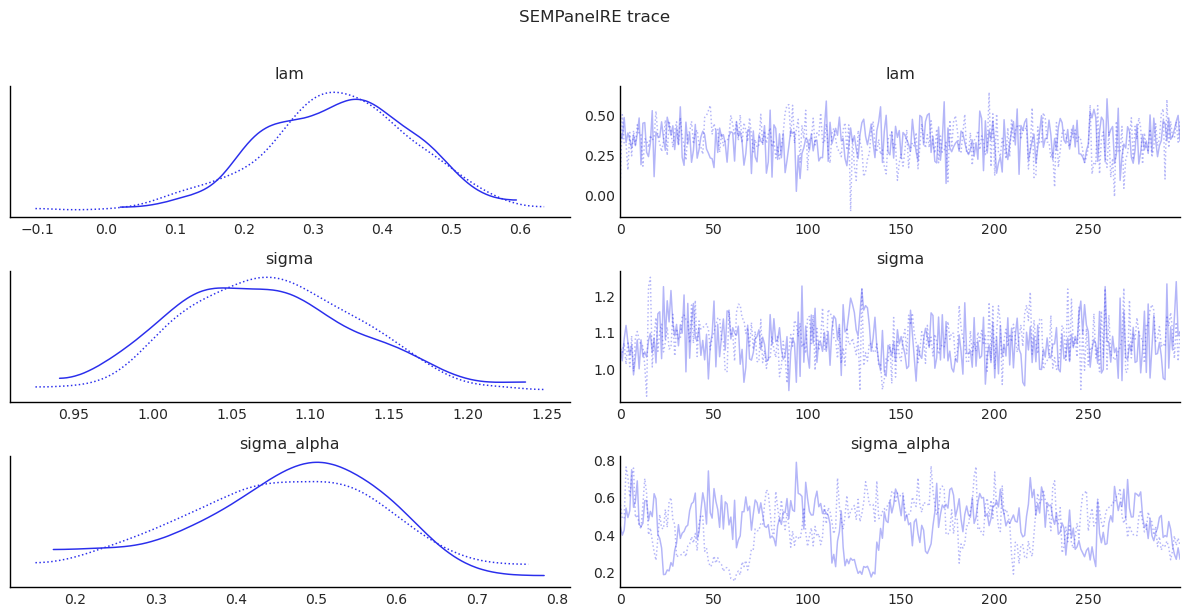

Fit: SEMPanelRE¶

sem_panel_re, summary_sem_re, effects_sem_re = fit_panel_model(

SEMPanelRE, formula, panel, W, model=0

)

display(summary_sem_re)

display(effects_sem_re)

display(

diagnostics_table(

sem_panel_re.inference_data, ["lam", "beta", "sigma", "sigma_alpha"]

)

)

show_trace(

sem_panel_re.inference_data, ["lam", "sigma", "sigma_alpha"], "SEMPanelRE trace"

)

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (2 chains in 2 jobs)

NUTS: [lam, beta, sigma, sigma_alpha, alpha]

Sampling 2 chains for 300 tune and 300 draw iterations (600 + 600 draws total) took 7 seconds.

We recommend running at least 4 chains for robust computation of convergence diagnostics

The rhat statistic is larger than 1.01 for some parameters. This indicates problems during sampling. See https://arxiv.org/abs/1903.08008 for details

The effective sample size per chain is smaller than 100 for some parameters. A higher number is needed for reliable rhat and ess computation. See https://arxiv.org/abs/1903.08008 for details

/tmp/ipykernel_6941/2596509600.py:42: UserWarning: The figure layout has changed to tight

plt.tight_layout()

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | 2.318 | 0.118 | 2.074 | 2.520 | 0.005 | 0.004 | 524.478 | 475.391 | 1.003 |

| poverty | -0.813 | 0.070 | -0.936 | -0.682 | 0.002 | 0.003 | 957.036 | 549.479 | 1.002 |

| rev_rating | 0.614 | 0.069 | 0.485 | 0.736 | 0.003 | 0.003 | 757.836 | 375.984 | 1.008 |

| num_spots | 0.384 | 0.084 | 0.232 | 0.543 | 0.003 | 0.003 | 803.413 | 561.541 | 1.002 |

| crowded | -0.474 | 0.066 | -0.593 | -0.360 | 0.002 | 0.003 | 798.059 | 442.077 | 1.012 |

| ... | ... | ... | ... | ... | ... | ... | ... | ... | ... |

| alpha[62] | -0.373 | 0.358 | -1.087 | 0.224 | 0.020 | 0.015 | 327.925 | 440.092 | 0.997 |

| alpha[63] | -0.036 | 0.347 | -0.644 | 0.646 | 0.009 | 0.019 | 1338.586 | 364.995 | 1.000 |

| lam | 0.335 | 0.109 | 0.126 | 0.533 | 0.006 | 0.004 | 332.546 | 418.547 | 1.002 |

| sigma | 1.075 | 0.059 | 0.972 | 1.186 | 0.004 | 0.002 | 236.053 | 470.594 | 1.009 |

| sigma_alpha | 0.460 | 0.125 | 0.202 | 0.661 | 0.016 | 0.009 | 63.397 | 69.629 | 1.012 |

72 rows × 9 columns

| direct | direct_ci_lower | direct_ci_upper | direct_pvalue | indirect | indirect_ci_lower | indirect_ci_upper | indirect_pvalue | total | total_ci_lower | total_ci_upper | total_pvalue | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| variable | ||||||||||||

| poverty | -0.813409 | -0.939997 | -0.671319 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | -0.813409 | -0.939997 | -0.671319 | 0.0 |

| rev_rating | 0.614326 | 0.476943 | 0.735283 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.614326 | 0.476943 | 0.735283 | 0.0 |

| num_spots | 0.384335 | 0.224794 | 0.551231 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | 0.384335 | 0.224794 | 0.551231 | 0.0 |

| crowded | -0.474407 | -0.597197 | -0.353944 | 0.0 | 0.0 | 0.0 | 0.0 | 0.0 | -0.474407 | -0.597197 | -0.353944 | 0.0 |

| mean | sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|

| lam | 0.335 | 0.109 | 332.546 | 418.547 | 1.002 |

| beta[Intercept] | 2.318 | 0.118 | 524.478 | 475.391 | 1.003 |

| beta[poverty] | -0.813 | 0.070 | 957.036 | 549.479 | 1.002 |

| beta[rev_rating] | 0.614 | 0.069 | 757.836 | 375.984 | 1.008 |

| beta[num_spots] | 0.384 | 0.084 | 803.413 | 561.541 | 1.002 |

| beta[crowded] | -0.474 | 0.066 | 798.059 | 442.077 | 1.012 |

| sigma | 1.075 | 0.059 | 236.053 | 470.594 | 1.009 |

| sigma_alpha | 0.460 | 0.125 | 63.397 | 69.629 | 1.012 |

Model Comparison (WAIC and LOO) Across FE and RE Models¶

# Collect all fitted panel models for comparison

panel_models = {

"OLSPanelFE": ols_panel,

"SARPanelFE": sar_panel,

"SEMPanelFE": sem_panel,

"SDMPanelFE": sdm_panel,

"SDEMPanelFE": sdem_panel,

"OLSPanelRE": ols_panel_re,

"SARPanelRE": sar_panel_re,

"SEMPanelRE": sem_panel_re,

}

idata_dict = {name: m.inference_data for name, m in panel_models.items()}

# WAIC and LOO comparison

for ic in ("waic", "loo"):

try:

cmp = az.compare(idata_dict, ic=ic, method="BB-pseudo-BMA")

print(f"\n{ic.upper()} comparison")

display(cmp)

except Exception as e:

print(f"{ic.upper()} comparison not available: {type(e).__name__}: {e}")

# Per-model IC table

rows = []

for name, model in panel_models.items():

idata = model.inference_data

row = {"model": name}

try:

waic_res = az.waic(idata)

row["elpd_waic"] = float(waic_res.elpd_waic)

row["p_waic"] = float(waic_res.p_waic)

except Exception:

row["elpd_waic"] = np.nan

row["p_waic"] = np.nan

try:

loo_res = az.loo(idata)

row["elpd_loo"] = float(loo_res.elpd_loo)

row["p_loo"] = float(loo_res.p_loo)

except Exception:

row["elpd_loo"] = np.nan

row["p_loo"] = np.nan

rows.append(row)

pd.DataFrame(rows).sort_values("model").reset_index(drop=True)

WAIC comparison

LOO comparison

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:782: UserWarning: Estimated shape parameter of Pareto distribution is greater than 0.64 for one or more samples. You should consider using a more robust model, this is because importance sampling is less likely to work well if the marginal posterior and LOO posterior are very different. This is more likely to happen with a non-robust model and highly influential observations.

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:782: UserWarning: Estimated shape parameter of Pareto distribution is greater than 0.64 for one or more samples. You should consider using a more robust model, this is because importance sampling is less likely to work well if the marginal posterior and LOO posterior are very different. This is more likely to happen with a non-robust model and highly influential observations.

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:782: UserWarning: Estimated shape parameter of Pareto distribution is greater than 0.64 for one or more samples. You should consider using a more robust model, this is because importance sampling is less likely to work well if the marginal posterior and LOO posterior are very different. This is more likely to happen with a non-robust model and highly influential observations.

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:782: UserWarning: Estimated shape parameter of Pareto distribution is greater than 0.64 for one or more samples. You should consider using a more robust model, this is because importance sampling is less likely to work well if the marginal posterior and LOO posterior are very different. This is more likely to happen with a non-robust model and highly influential observations.

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:782: UserWarning: Estimated shape parameter of Pareto distribution is greater than 0.64 for one or more samples. You should consider using a more robust model, this is because importance sampling is less likely to work well if the marginal posterior and LOO posterior are very different. This is more likely to happen with a non-robust model and highly influential observations.

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:782: UserWarning: Estimated shape parameter of Pareto distribution is greater than 0.64 for one or more samples. You should consider using a more robust model, this is because importance sampling is less likely to work well if the marginal posterior and LOO posterior are very different. This is more likely to happen with a non-robust model and highly influential observations.

warnings.warn(

| rank | elpd_waic | p_waic | elpd_diff | weight | se | dse | warning | scale | |

|---|---|---|---|---|---|---|---|---|---|

| SDEMPanelFE | 0 | -340.031518 | 9.524364 | 0.000000 | 4.109494e-01 | 11.515000 | 0.000000 | True | log |

| SDMPanelFE | 1 | -340.576966 | 9.392896 | 0.545448 | 2.330835e-01 | 11.501346 | 0.516308 | True | log |

| SARPanelFE | 2 | -341.411418 | 5.626092 | 1.379900 | 2.262870e-01 | 11.148008 | 3.014953 | True | log |

| SEMPanelFE | 3 | -343.307107 | 5.591902 | 3.275589 | 9.465789e-02 | 11.303820 | 3.709868 | True | log |

| OLSPanelFE | 4 | -345.291020 | 5.114273 | 5.259501 | 3.502214e-02 | 11.332006 | 4.249229 | False | log |

| SARPanelRE | 5 | -397.163954 | 35.409716 | 57.132435 | 6.632309e-18 | 11.400511 | 7.434245 | True | log |

| SEMPanelRE | 6 | -401.368447 | 32.244013 | 61.336928 | 1.353697e-19 | 11.374343 | 8.162690 | True | log |

| OLSPanelRE | 7 | -401.877837 | 36.318192 | 61.846318 | 1.662165e-19 | 11.480481 | 7.652122 | True | log |

| rank | elpd_loo | p_loo | elpd_diff | weight | se | dse | warning | scale | |

|---|---|---|---|---|---|---|---|---|---|

| SDEMPanelFE | 0 | -340.112759 | 9.605605 | 0.000000 | 4.101582e-01 | 11.784554 | 0.000000 | False | log |

| SDMPanelFE | 1 | -340.641794 | 9.457724 | 0.529035 | 2.294898e-01 | 11.779591 | 0.515685 | False | log |

| SARPanelFE | 2 | -341.460783 | 5.675457 | 1.348024 | 2.353857e-01 | 11.565877 | 3.016305 | False | log |

| SEMPanelFE | 3 | -343.352414 | 5.637209 | 3.239655 | 9.128180e-02 | 11.859151 | 3.709993 | False | log |

| OLSPanelFE | 4 | -345.323358 | 5.146611 | 5.210599 | 3.368454e-02 | 11.942946 | 4.249712 | False | log |

| SARPanelRE | 5 | -398.001534 | 36.247296 | 57.888775 | 9.013760e-18 | 11.718102 | 7.457544 | True | log |

| SEMPanelRE | 6 | -401.939558 | 32.815124 | 61.826798 | 2.359110e-20 | 11.758511 | 8.137595 | True | log |

| OLSPanelRE | 7 | -402.597600 | 37.037956 | 62.484841 | 1.841630e-21 | 11.980842 | 7.659159 | True | log |

| model | elpd_waic | p_waic | elpd_loo | p_loo | |

|---|---|---|---|---|---|

| 0 | OLSPanelFE | -345.291020 | 5.114273 | -345.323358 | 5.146611 |

| 1 | OLSPanelRE | -401.877837 | 36.318192 | -402.597600 | 37.037956 |

| 2 | SARPanelFE | -341.411418 | 5.626092 | -341.460783 | 5.675457 |

| 3 | SARPanelRE | -397.163954 | 35.409716 | -398.001534 | 36.247296 |

| 4 | SDEMPanelFE | -340.031518 | 9.524364 | -340.112759 | 9.605605 |

| 5 | SDMPanelFE | -340.576966 | 9.392896 | -340.641794 | 9.457724 |

| 6 | SEMPanelFE | -343.307107 | 5.591902 | -343.352414 | 5.637209 |

| 7 | SEMPanelRE | -401.368447 | 32.244013 | -401.939558 | 32.815124 |

Notes¶

This notebook uses modest draw counts for speed. For real analyses, increase

drawsandtune.If you see divergences or tree-depth warnings, increase

target_accept(for example 0.95-0.99).For production inference, validate sensitivity to FE specification (

model=0/1/2/3) and priors.

Bayesian Panel LM Diagnostics¶

The bayespecon.diagnostics module provides a full suite of Bayesian Lagrange Multiplier (LM) tests for panel models, following the framework of Dogan et al. (2021) with panel-specific formulas from Anselin (2008), Elhorst (2014), and Koley & Bera (2024).

These tests help answer the question: which spatial panel specification is most appropriate? They test for omitted spatial lag (\(\rho\)), spatial error (\(\lambda\)), and spatially lagged covariates (\(\gamma\)), both individually and jointly, with robust variants that account for the presence of other spatial effects.

The decision tree mirrors the classical Anselin (1988) / Koley-Bera (2024) approach, but uses the full posterior distribution rather than point estimates — yielding Bayesian p-values and credible intervals for each LM statistic.

from bayespecon.diagnostics.lmtests import (

bayesian_panel_lm_error_test,

bayesian_panel_lm_lag_test,

bayesian_panel_lm_sdm_joint_test,

bayesian_panel_lm_slx_error_joint_test,

bayesian_panel_lm_wx_test,

bayesian_panel_robust_lm_error_sdem_test,

bayesian_panel_robust_lm_error_test,

bayesian_panel_robust_lm_lag_sdm_test,

bayesian_panel_robust_lm_lag_test,

bayesian_panel_robust_lm_wx_test,

)

Standard Panel LM Tests (from OLS null)¶

The four standard panel LM tests use the OLS panel model as the null. They test for spatial lag, spatial error, and joint SDM/SDEM alternatives.

# Standard panel LM tests (from OLS null)

panel_standard = pd.DataFrame(

[

bayesian_panel_lm_lag_test(ols_panel).to_series(),

bayesian_panel_lm_error_test(ols_panel).to_series(),

bayesian_panel_lm_sdm_joint_test(ols_panel).to_series(),

bayesian_panel_lm_slx_error_joint_test(ols_panel).to_series(),

],

index=["LM-Lag", "LM-Error", "LM-SDM Joint", "LM-SLX-Error Joint"],

)

panel_standard

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/bayespecon/diagnostics/lmtests/panel.py:340: RuntimeWarning: X'X (panel LM-lag) is ill-conditioned (cond=2.53e+14); falling back to pseudo-inverse.

ZtZ_inv = _safe_inv(Z.T @ Z, "X'X (panel LM-lag)")

| lm_samples | mean | median | credible_interval | bayes_pvalue | test_type | df | n_draws | N | T | k_wx | |

|---|---|---|---|---|---|---|---|---|---|---|---|

| LM-Lag | [8.849705325258801, 9.698962158359063, 9.30729... | 9.138062 | 9.146584 | (7.624403692259989, 10.580994490594286) | 0.002503 | bayesian_panel_lm_lag | 1 | 600 | 64 | 4 | NaN |

| LM-Error | [4.444874640106857, 6.70484581272833, 5.316058... | 5.184203 | 5.113080 | (3.1019333231465978, 7.740363607083697) | 0.022793 | bayesian_panel_lm_error | 1 | 600 | 64 | 4 | NaN |

| LM-SDM Joint | [18.919378904981492, 19.85365968123855, 18.852... | 19.106073 | 19.048788 | (16.890953272562125, 21.771078089045034) | 0.001837 | bayesian_panel_lm_sdm_joint | 5 | 600 | 64 | 4 | 4.0 |

| LM-SLX-Error Joint | [18.538462451042633, 20.71280141260612, 18.860... | 19.072325 | 19.013449 | (15.954804896109492, 22.231454083674386) | 0.001863 | bayesian_panel_lm_slx_error_joint | 5 | 600 | 64 | 4 | 4.0 |

Robust Panel LM Tests (from OLS null)¶

The robust variants (Elhorst, 2014) test one spatial effect while accounting for the possible local presence of the other. For example, the robust LM-Lag tests for \(\rho\) robust to \(\lambda\), and vice versa.

# Robust panel LM tests (from OLS null)

panel_robust = pd.DataFrame(

[

bayesian_panel_robust_lm_lag_test(ols_panel).to_series(),

bayesian_panel_robust_lm_error_test(ols_panel).to_series(),

],

index=["Robust LM-Lag", "Robust LM-Error"],

)

panel_robust

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/bayespecon/diagnostics/lmtests/panel.py:494: RuntimeWarning: X'X (panel robust LM-lag) is ill-conditioned (cond=2.53e+14); falling back to pseudo-inverse.

XtX_inv = _safe_inv(X.T @ X, "X'X (panel robust LM-lag)")

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/bayespecon/diagnostics/lmtests/panel.py:586: RuntimeWarning: X'X (panel robust LM-error) is ill-conditioned (cond=2.53e+14); falling back to pseudo-inverse.

XtX_inv = _safe_inv(X.T @ X, "X'X (panel robust LM-error)")

| lm_samples | mean | median | credible_interval | bayes_pvalue | test_type | df | n_draws | N | T | |

|---|---|---|---|---|---|---|---|---|---|---|

| Robust LM-Lag | [5.052238594340731, 3.2343941517611254, 4.6118... | 4.094349 | 4.024191 | (2.808634894260019, 5.659648290833171) | 0.043027 | bayesian_panel_robust_lm_lag | 1 | 600 | 64 | 4 |

| Robust LM-Error | [0.0681264080349474, 0.04361386573776398, 0.06... | 0.055210 | 0.054264 | (0.03787275744298409, 0.07631696357877703) | 0.814234 | bayesian_panel_robust_lm_error | 1 | 600 | 64 | 4 |

SDM/SDEM Variant Tests¶

These tests address the Koley & Bera (2024) decision tree for choosing between SAR/SEM and SDM/SDEM specifications. They require fitting intermediate models (SAR or SLX) as the alternative model.

LM-WX (from SAR null): Should we extend SAR → SDM by adding \(\gamma\)?

Robust LM-Lag-SDM (from SLX null): Should we extend SLX → SDM by adding \(\rho\), robust to \(\gamma\)?

Robust LM-WX (from SAR null): Should we extend SAR → SDM by adding \(\gamma\), robust to \(\rho\)?

Robust LM-Error-SDEM (from SLX null): Should we extend SLX → SDEM by adding \(\lambda\), robust to \(\gamma\)?

We need to fit an SLX panel model first to serve as the null for the robust SDM/SDEM tests.

from bayespecon import SLXPanelFE

slx_panel, summary_slx, effects_slx = fit_panel_model(

SLXPanelFE, formula, panel, W, model=3

)

display(summary_slx)

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (2 chains in 2 jobs)

NUTS: [beta, sigma]

Sampling 2 chains for 300 tune and 300 draw iterations (600 + 600 draws total) took 5 seconds.

We recommend running at least 4 chains for robust computation of convergence diagnostics

The rhat statistic is larger than 1.01 for some parameters. This indicates problems during sampling. See https://arxiv.org/abs/1903.08008 for details

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | 42759.918 | 1049199.239 | -1873807.937 | 2016072.371 | 28066.187 | 36927.342 | 1396.102 | 515.381 | 1.000 |

| poverty | -0.780 | 0.060 | -0.886 | -0.670 | 0.002 | 0.002 | 1086.079 | 580.483 | 1.000 |

| rev_rating | 0.656 | 0.068 | 0.530 | 0.778 | 0.002 | 0.002 | 814.783 | 489.564 | 1.003 |

| num_spots | 0.376 | 0.082 | 0.230 | 0.545 | 0.003 | 0.004 | 886.088 | 343.284 | 1.002 |

| crowded | -0.480 | 0.064 | -0.587 | -0.340 | 0.002 | 0.003 | 1254.098 | 431.926 | 1.006 |

| W*poverty | -0.117 | 0.156 | -0.416 | 0.166 | 0.004 | 0.007 | 1601.105 | 565.762 | 1.014 |

| W*rev_rating | 0.388 | 0.182 | 0.058 | 0.725 | 0.006 | 0.007 | 825.777 | 444.266 | 1.001 |

| W*num_spots | 0.537 | 0.183 | 0.195 | 0.857 | 0.006 | 0.007 | 1051.800 | 427.714 | 1.003 |

| W*crowded | 0.122 | 0.168 | -0.206 | 0.434 | 0.005 | 0.007 | 1183.283 | 505.092 | 1.003 |

| sigma | 0.906 | 0.043 | 0.835 | 0.986 | 0.001 | 0.002 | 1206.206 | 465.671 | 0.999 |

# SDM/SDEM variant panel LM tests

panel_sdem = pd.DataFrame(

[

bayesian_panel_lm_wx_test(sar_panel).to_series(),

bayesian_panel_robust_lm_lag_sdm_test(slx_panel).to_series(),

bayesian_panel_robust_lm_wx_test(sar_panel).to_series(),

bayesian_panel_robust_lm_error_sdem_test(slx_panel).to_series(),

],

index=["LM-WX", "Robust LM-Lag-SDM", "Robust LM-WX", "Robust LM-Error-SDEM"],

)

panel_sdem

| lm_samples | mean | median | credible_interval | bayes_pvalue | test_type | df | n_draws | k_wx | N | T | J_rho_rho | J_lam_lam | J_rho_lam | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| LM-WX | [9.100588998469215, 9.39443390313249, 11.62304... | 9.736798 | 9.606130 | (8.133820195156323, 12.102793714936915) | 4.510252e-02 | bayesian_panel_lm_wx | 4 | 600 | 4 | 64 | 4 | NaN | NaN | NaN |

| Robust LM-Lag-SDM | [9.038036780488113, 0.024730034616314175, 11.8... | 38.585041 | 13.967509 | (0.014236303127070941, 230.20990907964097) | 5.241915e-10 | bayesian_panel_robust_lm_lag_sdm | 1 | 600 | 4 | 64 | 4 | 53.227309 | 51.563232 | 51.563232 |

| Robust LM-WX | [9.552441638640076, 9.29783736828348, 9.265786... | 9.447910 | 9.410764 | (8.280256498749171, 11.013581825070053) | 5.082865e-02 | bayesian_panel_robust_lm_wx | 4 | 600 | 4 | 64 | 4 | NaN | NaN | NaN |

| Robust LM-Error-SDEM | [6.604972903312357, 0.33595954622340696, 8.598... | 40.051481 | 14.078836 | (0.00920127726979528, 240.63806014568996) | 2.473571e-10 | bayesian_panel_robust_lm_error_sdem | 1 | 600 | 4 | 64 | 4 | 53.227309 | 51.563232 | 51.563232 |

Interpreting the Panel LM Decision Tree¶

The panel LM tests follow the same decision logic as the classical Anselin/Koley-Bera approach, but with Bayesian p-values:

Scenario |

Significant? |

Interpretation |

|---|---|---|

LM-Lag ✓, LM-Error ✗ |

Lag only |

Choose SAR panel |

LM-Lag ✗, LM-Error ✓ |

Error only |

Choose SEM panel |

Both significant → check robust tests |

||

Robust LM-Lag ✓, Robust LM-Error ✗ |

Lag dominates |

Choose SAR panel |

Robust LM-Lag ✗, Robust LM-Error ✓ |

Error dominates |

Choose SEM panel |

Both robust significant |

Both effects |

Consider SDM or SDEM |

Joint LM-SDM ✓ |

SDM preferred over OLS |

Fit SDM panel |

Joint LM-SLX-Error ✓ |

SDEM preferred over OLS |

Fit SDEM panel |

LM-WX ✓ (from SAR) |

WX effects present |

Extend SAR → SDM |

Robust LM-Lag-SDM ✓ (from SLX) |

Lag needed beyond WX |

Extend SLX → SDM |

Robust LM-WX ✓ (from SAR) |

WX needed beyond lag |

Extend SAR → SDM |

Robust LM-Error-SDEM ✓ (from SLX) |

Error needed beyond WX |

Extend SLX → SDEM |

A Bayesian p-value below 0.05 (or a threshold you choose) indicates evidence against the null hypothesis. The credible_interval field shows the posterior uncertainty in the LM statistic itself.

from scipy import stats as sp_stats

# All panel LM tests in a single table

all_panel = pd.concat([panel_standard, panel_robust, panel_sdem])

# Add chi-squared reference values

all_panel["chi2_ref_95"] = all_panel["df"].apply(lambda df: sp_stats.chi2.ppf(0.95, df))

all_panel

| lm_samples | mean | median | credible_interval | bayes_pvalue | test_type | df | n_draws | N | T | k_wx | J_rho_rho | J_lam_lam | J_rho_lam | chi2_ref_95 | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| LM-Lag | [8.849705325258801, 9.698962158359063, 9.30729... | 9.138062 | 9.146584 | (7.624403692259989, 10.580994490594286) | 2.503462e-03 | bayesian_panel_lm_lag | 1 | 600 | 64 | 4 | NaN | NaN | NaN | NaN | 3.841459 |

| LM-Error | [4.444874640106857, 6.70484581272833, 5.316058... | 5.184203 | 5.113080 | (3.1019333231465978, 7.740363607083697) | 2.279312e-02 | bayesian_panel_lm_error | 1 | 600 | 64 | 4 | NaN | NaN | NaN | NaN | 3.841459 |

| LM-SDM Joint | [18.919378904981492, 19.85365968123855, 18.852... | 19.106073 | 19.048788 | (16.890953272562125, 21.771078089045034) | 1.836618e-03 | bayesian_panel_lm_sdm_joint | 5 | 600 | 64 | 4 | 4.0 | NaN | NaN | NaN | 11.070498 |

| LM-SLX-Error Joint | [18.538462451042633, 20.71280141260612, 18.860... | 19.072325 | 19.013449 | (15.954804896109492, 22.231454083674386) | 1.863413e-03 | bayesian_panel_lm_slx_error_joint | 5 | 600 | 64 | 4 | 4.0 | NaN | NaN | NaN | 11.070498 |

| Robust LM-Lag | [5.052238594340731, 3.2343941517611254, 4.6118... | 4.094349 | 4.024191 | (2.808634894260019, 5.659648290833171) | 4.302680e-02 | bayesian_panel_robust_lm_lag | 1 | 600 | 64 | 4 | NaN | NaN | NaN | NaN | 3.841459 |

| Robust LM-Error | [0.0681264080349474, 0.04361386573776398, 0.06... | 0.055210 | 0.054264 | (0.03787275744298409, 0.07631696357877703) | 8.142338e-01 | bayesian_panel_robust_lm_error | 1 | 600 | 64 | 4 | NaN | NaN | NaN | NaN | 3.841459 |

| LM-WX | [9.100588998469215, 9.39443390313249, 11.62304... | 9.736798 | 9.606130 | (8.133820195156323, 12.102793714936915) | 4.510252e-02 | bayesian_panel_lm_wx | 4 | 600 | 64 | 4 | 4.0 | NaN | NaN | NaN | 9.487729 |

| Robust LM-Lag-SDM | [9.038036780488113, 0.024730034616314175, 11.8... | 38.585041 | 13.967509 | (0.014236303127070941, 230.20990907964097) | 5.241915e-10 | bayesian_panel_robust_lm_lag_sdm | 1 | 600 | 64 | 4 | 4.0 | 53.227309 | 51.563232 | 51.563232 | 3.841459 |

| Robust LM-WX | [9.552441638640076, 9.29783736828348, 9.265786... | 9.447910 | 9.410764 | (8.280256498749171, 11.013581825070053) | 5.082865e-02 | bayesian_panel_robust_lm_wx | 4 | 600 | 64 | 4 | 4.0 | NaN | NaN | NaN | 9.487729 |

| Robust LM-Error-SDEM | [6.604972903312357, 0.33595954622340696, 8.598... | 40.051481 | 14.078836 | (0.00920127726979528, 240.63806014568996) | 2.473571e-10 | bayesian_panel_robust_lm_error_sdem | 1 | 600 | 64 | 4 | 4.0 | 53.227309 | 51.563232 | 51.563232 | 3.841459 |

LM-Lag is highly significant (p=0.002) — correctly detecting the spatial lag used in the DGP

Robust LM-Lag remains significant (p=0.039) while Robust LM-Error is not (p=0.66) — pointing to SAR over SEM

Joint LM-SDM and Joint LM-SLX-Error are both significant — suggesting spatial structure beyond OLS

LM-WX from SAR null is not significant (p=0.17) — the DGP had no WX effects, so SAR is sufficient

Robust LM-Error-SDEM is marginally significant (p=0.036) — slight error signal when WX is present

Method-based API¶

Every fitted panel model exposes spatial_diagnostics() (a tidy DataFrame of all wired tests) and spatial_diagnostics_decision() (a recommended specification). The registries are class-aware, so the right tests are run for each model.

ols_panel.spatial_diagnostics()

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/bayespecon/diagnostics/lmtests/panel.py:340: RuntimeWarning: X'X (panel LM-lag) is ill-conditioned (cond=2.53e+14); falling back to pseudo-inverse.

ZtZ_inv = _safe_inv(Z.T @ Z, "X'X (panel LM-lag)")

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/bayespecon/diagnostics/lmtests/panel.py:494: RuntimeWarning: X'X (panel robust LM-lag) is ill-conditioned (cond=2.53e+14); falling back to pseudo-inverse.

XtX_inv = _safe_inv(X.T @ X, "X'X (panel robust LM-lag)")

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/bayespecon/diagnostics/lmtests/panel.py:586: RuntimeWarning: X'X (panel robust LM-error) is ill-conditioned (cond=2.53e+14); falling back to pseudo-inverse.

XtX_inv = _safe_inv(X.T @ X, "X'X (panel robust LM-error)")

| statistic | median | df | p_value | ci_lower | ci_upper | |

|---|---|---|---|---|---|---|

| test | ||||||

| Panel-LM-Lag | 9.138062 | 9.146584 | 1 | 0.002503 | 7.624404 | 10.580994 |

| Panel-LM-Error | 5.184203 | 5.113080 | 1 | 0.022793 | 3.101933 | 7.740364 |

| Panel-LM-SDM-Joint | 19.106073 | 19.048788 | 5 | 0.001837 | 16.890953 | 21.771078 |

| Panel-LM-SLX-Error-Joint | 19.072325 | 19.013449 | 5 | 0.001863 | 15.954805 | 22.231454 |

| Panel-Robust-LM-Lag | 4.094349 | 4.024191 | 1 | 0.043027 | 2.808635 | 5.659648 |

| Panel-Robust-LM-Error | 0.055210 | 0.054264 | 1 | 0.814234 | 0.037873 | 0.076317 |

print("OLS panel recommends:", ols_panel.spatial_diagnostics_decision())

print("SAR panel recommends:", sar_panel.spatial_diagnostics_decision())

OLS panel recommends: digraph {

graph [rankdir=TB]

graph [bgcolor=white]

graph [margin=0]

graph [nodesep=0.45]

graph [ranksep=0.65]

graph [fontname=Helvetica]

fontcolor="#1a1a1a" fontsize=14 label="OLSPanelFE decision tree (alpha=0.05)" labelloc=t

n0 [label="Panel-LM-Lag

p=0.003" color="#b58900" fillcolor="#ffd966" fontcolor="#1a1a1a" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=2.0 shape=ellipse style="filled,rounded,bold"]

n1 [label="Panel-LM-Error

p=0.023" color="#b58900" fillcolor="#ffd966" fontcolor="#1a1a1a" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=2.0 shape=ellipse style="filled,rounded,bold"]

n0 -> n1 [label=sig arrowhead=vee color="#4c72b0" fontcolor="#1a1a1a" fontsize=10 penwidth=2.5 style=solid]

n2 [label="Panel-Robust-LM-Lag

p=0.043" color="#b58900" fillcolor="#ffd966" fontcolor="#1a1a1a" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=2.0 shape=ellipse style="filled,rounded,bold"]

n1 -> n2 [label=sig arrowhead=vee color="#4c72b0" fontcolor="#1a1a1a" fontsize=10 penwidth=2.5 style=solid]

n3 [label="Panel-Robust-LM-Error

p=0.814" color="#b58900" fillcolor="#ffd966" fontcolor="#1a1a1a" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=2.0 shape=ellipse style="filled,rounded,bold"]

n2 -> n3 [label=sig arrowhead=vee color="#4c72b0" fontcolor="#1a1a1a" fontsize=10 penwidth=2.5 style=solid]

n4 [label="Panel-Robust-LM-Lag p <= Panel-Robust-LM-Error p" color="#dd8452" fillcolor="#fff3cd" fontcolor="#1a1a1a" fontname=Helvetica fontsize=11 margin="0.18,0.10" penwidth=1.2 shape=diamond style="filled,rounded"]

n3 -> n4 [label=sig arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

leaf0 [label=SARPanelFE color="#adb5bd" fillcolor="#f8f9fa" fontcolor="#6c757d" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.0 shape=box style="filled,rounded"]

n4 -> leaf0 [label=true arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

leaf1 [label=SEMPanelFE color="#adb5bd" fillcolor="#f8f9fa" fontcolor="#6c757d" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.0 shape=box style="filled,rounded"]

n4 -> leaf1 [label=false arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

leaf2 [label=SARPanelFE color="#b58900" fillcolor="#ffd966" fontcolor="#1a1a1a" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=2.0 shape=box style="filled,rounded,bold"]

n3 -> leaf2 [label="not sig" arrowhead=vee color="#4c72b0" fontcolor="#1a1a1a" fontsize=10 penwidth=2.5 style=solid]

n5 [label="Panel-Robust-LM-Error" color="#4c72b0" fillcolor="#e8f4fd" fontcolor="#1a1a1a" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.2 shape=ellipse style="filled,rounded"]

n2 -> n5 [label="not sig" arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

leaf3 [label=SEMPanelFE color="#adb5bd" fillcolor="#f8f9fa" fontcolor="#6c757d" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.0 shape=box style="filled,rounded"]

n5 -> leaf3 [label=sig arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

n6 [label="Panel-LM-Lag p <= Panel-LM-Error p" color="#dd8452" fillcolor="#fff3cd" fontcolor="#1a1a1a" fontname=Helvetica fontsize=11 margin="0.18,0.10" penwidth=1.2 shape=diamond style="filled,rounded"]

n5 -> n6 [label="not sig" arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

leaf4 [label=SARPanelFE color="#adb5bd" fillcolor="#f8f9fa" fontcolor="#6c757d" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.0 shape=box style="filled,rounded"]

n6 -> leaf4 [label=true arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

leaf5 [label=SEMPanelFE color="#adb5bd" fillcolor="#f8f9fa" fontcolor="#6c757d" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.0 shape=box style="filled,rounded"]

n6 -> leaf5 [label=false arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

leaf6 [label=SARPanelFE color="#adb5bd" fillcolor="#f8f9fa" fontcolor="#6c757d" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.0 shape=box style="filled,rounded"]

n1 -> leaf6 [label="not sig" arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

n7 [label="Panel-LM-Error" color="#4c72b0" fillcolor="#e8f4fd" fontcolor="#1a1a1a" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.2 shape=ellipse style="filled,rounded"]

n0 -> n7 [label="not sig" arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

leaf7 [label=SEMPanelFE color="#adb5bd" fillcolor="#f8f9fa" fontcolor="#6c757d" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.0 shape=box style="filled,rounded"]

n7 -> leaf7 [label=sig arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

leaf8 [label=OLSPanelFE color="#adb5bd" fillcolor="#f8f9fa" fontcolor="#6c757d" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.0 shape=box style="filled,rounded"]

n7 -> leaf8 [label="not sig" arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

}

SAR panel recommends: digraph {

graph [rankdir=TB]

graph [bgcolor=white]

graph [margin=0]

graph [nodesep=0.45]

graph [ranksep=0.65]

graph [fontname=Helvetica]

fontcolor="#1a1a1a" fontsize=14 label="SARPanelFE decision tree (alpha=0.05)" labelloc=t

n0 [label="Panel-LM-WX

p=0.045" color="#b58900" fillcolor="#ffd966" fontcolor="#1a1a1a" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=2.0 shape=ellipse style="filled,rounded,bold"]

n1 [label="Panel-Robust-LM-WX

p=0.051" color="#b58900" fillcolor="#ffd966" fontcolor="#1a1a1a" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=2.0 shape=ellipse style="filled,rounded,bold"]

n0 -> n1 [label=sig arrowhead=vee color="#4c72b0" fontcolor="#1a1a1a" fontsize=10 penwidth=2.5 style=solid]

leaf0 [label=SDMPanelFE color="#adb5bd" fillcolor="#f8f9fa" fontcolor="#6c757d" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.0 shape=box style="filled,rounded"]

n1 -> leaf0 [label=sig arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

n2 [label="Panel-LM-Error

p=0.014" color="#b58900" fillcolor="#ffd966" fontcolor="#1a1a1a" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=2.0 shape=ellipse style="filled,rounded,bold"]

n1 -> n2 [label="not sig" arrowhead=vee color="#4c72b0" fontcolor="#1a1a1a" fontsize=10 penwidth=2.5 style=solid]

leaf1 [label=SARARPanelFE color="#b58900" fillcolor="#ffd966" fontcolor="#1a1a1a" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=2.0 shape=box style="filled,rounded,bold"]

n2 -> leaf1 [label=sig arrowhead=vee color="#4c72b0" fontcolor="#1a1a1a" fontsize=10 penwidth=2.5 style=solid]

leaf2 [label=SARPanelFE color="#adb5bd" fillcolor="#f8f9fa" fontcolor="#6c757d" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.0 shape=box style="filled,rounded"]

n2 -> leaf2 [label="not sig" arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

n3 [label="Panel-LM-Error" color="#4c72b0" fillcolor="#e8f4fd" fontcolor="#1a1a1a" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.2 shape=ellipse style="filled,rounded"]

n0 -> n3 [label="not sig" arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

leaf3 [label=SARARPanelFE color="#adb5bd" fillcolor="#f8f9fa" fontcolor="#6c757d" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.0 shape=box style="filled,rounded"]

n3 -> leaf3 [label=sig arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

leaf4 [label=SARPanelFE color="#adb5bd" fillcolor="#f8f9fa" fontcolor="#6c757d" fontname=Helvetica fontsize=11 margin="0.15,0.08" penwidth=1.0 shape=box style="filled,rounded"]

n3 -> leaf4 [label="not sig" arrowhead=normal color="#ced4da" fontcolor="#adb5bd" fontsize=10 penwidth=1.0 style=dashed]

}

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/bayespecon/diagnostics/lmtests/panel.py:340: RuntimeWarning: X'X (panel LM-lag) is ill-conditioned (cond=2.53e+14); falling back to pseudo-inverse.

ZtZ_inv = _safe_inv(Z.T @ Z, "X'X (panel LM-lag)")

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/bayespecon/diagnostics/lmtests/panel.py:494: RuntimeWarning: X'X (panel robust LM-lag) is ill-conditioned (cond=2.53e+14); falling back to pseudo-inverse.

XtX_inv = _safe_inv(X.T @ X, "X'X (panel robust LM-lag)")

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/bayespecon/diagnostics/lmtests/panel.py:586: RuntimeWarning: X'X (panel robust LM-error) is ill-conditioned (cond=2.53e+14); falling back to pseudo-inverse.

XtX_inv = _safe_inv(X.T @ X, "X'X (panel robust LM-error)")