Bayesian Spatial Models: A Pedagogical Walkthrough¶

This notebook demonstrates all five models currently implemented in bayespecon:

SLX

SAR

SEM

SDM

SDEM

Each section explains the model idea, fits the model, and inspects posterior summaries and spatial effects.

Conceptual Roadmap¶

Let \(W\) denote the spatial weights matrix and \(WX\) the spatial lag of covariates.

SLX: \(y = X\beta + WX\theta + \epsilon\)

SAR: \(y = \rho Wy + X\beta + \epsilon\)

SEM: \(y = X\beta + u\), \(u = \lambda Wu + \epsilon\)

SDM: \(y = \rho Wy + X\beta + WX\theta + \epsilon\)

SDEM: \(y = X\beta + WX\theta + u\), \(u = \lambda Wu + \epsilon\)

In bayespecon, all models accept either:

formula mode:

model_class(formula=..., data=..., W=...), ormatrix mode:

model_class(y=..., X=..., W=...).

import arviz as az

import geodatasets

import geopandas as gpd

import matplotlib.pyplot as plt

import pandas as pd

from libpysal.graph import Graph

from bayespecon import OLS, SAR, SDEM, SDM, SEM, SLX

from bayespecon.diagnostics.bayesfactor import bayes_factor_compare_models

az.style.use("arviz-white")

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/tqdm/auto.py:21: TqdmWarning: IProgress not found. Please update jupyter and ipywidgets. See https://ipywidgets.readthedocs.io/en/stable/user_install.html

from .autonotebook import tqdm as notebook_tqdm

# Load sample spatial dataset

gdf = gpd.read_file(geodatasets.data.geoda.airbnb.url)

xcols = ["poverty", "rev_rating", "num_spots", "crowded"]

ycol = "price_pp"

gdf = gdf.dropna(subset=xcols + [ycol]).copy()

# Build contiguity graph (queen)

W = Graph.build_contiguity(gdf, rook=False).transform("r")

print(f"Observations: {len(gdf)}")

print(f"Predictors: {xcols}")

Observations: 67

Predictors: ['poverty', 'rev_rating', 'num_spots', 'crowded']

Helper Functions

To keep each model section focused, we use utility functions to:

fit with consistent MCMC settings,

print posterior summaries,

tabulate direct/indirect/total effects.

For a quick pedagogical run, we use small draw counts. Increase these for real inference.

def fit_and_report(model_cls, formula, data, W, draws=400, tune=400, chains=4, seed=42):

"""Fit a spatial model and return (model, summary, effects_df).

Uses minimal MCMC settings for pedagogical demonstration.

Increase draws/tune for real analyses.

"""

model = model_cls(formula=formula, data=data, W=W, logdet_method="eigenvalue")

model.fit(

draws=draws,

tune=tune,

chains=chains,

target_accept=0.9,

random_seed=seed,

progressbar=False,

idata_kwargs={"log_likelihood": True},

)

summary = model.summary(round_to=3)

effects_df = pd.DataFrame(model.spatial_effects())

return model, summary, effects_df

Convergence Diagnostics Helper

For each fitted model, we will inspect:

r_hat(target close to 1.00)effective sample sizes (

ess_bulk,ess_tail)trace plots for key parameters.

def diagnostics_table(idata, var_names):

"""Show key MCMC diagnostics for the given parameters."""

cols = ["mean", "sd", "ess_bulk", "ess_tail", "r_hat"]

return az.summary(idata, var_names=var_names, round_to=3)[cols]

def show_trace(idata, var_names, title):

"""Plot trace plots for the given parameters."""

az.plot_trace(idata, var_names=var_names)

plt.suptitle(title, y=1.02)

plt.tight_layout()

plt.show()

Models¶

0) OLS: What could go wrong?¶

Model:

ols = OLS(

formula="price_pp ~ poverty + rev_rating + num_spots + crowded",

data=gdf,

W=W,

)

olsfit = ols.fit(

draws=400,

tune=400,

chains=4,

target_accept=0.9,

random_seed=42,

progressbar=False,

idata_kwargs={"log_likelihood": True},

)

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 2 jobs)

NUTS: [beta, sigma]

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

Sampling 4 chains for 400 tune and 400 draw iterations (1_600 + 1_600 draws total) took 17 seconds.

ols.summary()

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | -9.492 | 85.004 | -163.973 | 154.189 | 3.221 | 2.087 | 694.0 | 835.0 | 1.01 |

| poverty | 0.272 | 0.343 | -0.365 | 0.940 | 0.010 | 0.009 | 1142.0 | 886.0 | 1.00 |

| rev_rating | 0.845 | 0.891 | -0.877 | 2.466 | 0.033 | 0.022 | 709.0 | 817.0 | 1.01 |

| num_spots | 0.119 | 0.023 | 0.079 | 0.162 | 0.001 | 0.001 | 1279.0 | 1066.0 | 1.00 |

| crowded | -2.261 | 0.922 | -4.075 | -0.622 | 0.026 | 0.024 | 1308.0 | 1107.0 | 1.00 |

| sigma | 26.243 | 2.212 | 22.556 | 30.772 | 0.063 | 0.060 | 1227.0 | 927.0 | 1.01 |

1) SLX: Spatially Lagged Covariates Only¶

Model:

Interpretation:

betacaptures local covariate effects.thetacaptures neighbor-covariate spillovers.No spatial lag on \(y\), so no autoregressive propagation through outcomes.

slx, summary_slx, effects_slx = fit_and_report(

SLX,

formula="price_pp ~ poverty + rev_rating + num_spots + crowded",

data=gdf,

W=W,

)

display(summary_slx)

display(effects_slx)

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 2 jobs)

NUTS: [beta, sigma]

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

Sampling 4 chains for 400 tune and 400 draw iterations (1_600 + 1_600 draws total) took 28 seconds.

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | 198.403 | 214.440 | -216.269 | 579.810 | 8.396 | 6.091 | 650.362 | 734.798 | 1.005 |

| poverty | -0.655 | 0.455 | -1.486 | 0.222 | 0.016 | 0.013 | 826.660 | 854.663 | 1.000 |

| rev_rating | 0.265 | 0.911 | -1.323 | 2.049 | 0.031 | 0.030 | 885.762 | 734.053 | 1.009 |

| num_spots | 0.019 | 0.030 | -0.034 | 0.078 | 0.001 | 0.001 | 774.424 | 820.683 | 1.000 |

| crowded | -1.078 | 1.176 | -3.237 | 1.093 | 0.039 | 0.028 | 893.113 | 1080.138 | 1.001 |

| W*poverty | 1.148 | 0.692 | -0.209 | 2.357 | 0.026 | 0.020 | 709.801 | 834.838 | 1.003 |

| W*rev_rating | -1.776 | 1.847 | -4.915 | 2.032 | 0.068 | 0.046 | 731.538 | 815.410 | 1.004 |

| W*num_spots | 0.203 | 0.048 | 0.108 | 0.288 | 0.002 | 0.001 | 766.181 | 782.735 | 1.002 |

| W*crowded | -1.390 | 1.666 | -4.519 | 1.585 | 0.060 | 0.042 | 769.779 | 983.167 | 1.001 |

| sigma | 23.717 | 2.027 | 20.246 | 27.729 | 0.065 | 0.061 | 1021.307 | 614.013 | 1.007 |

| direct | direct_ci_lower | direct_ci_upper | direct_pvalue | indirect | indirect_ci_lower | indirect_ci_upper | indirect_pvalue | total | total_ci_lower | total_ci_upper | total_pvalue | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| variable | ||||||||||||

| poverty | -0.655270 | -1.563164 | 0.233338 | 0.14625 | 1.147955 | -0.178487 | 2.545777 | 0.0925 | 0.492684 | -0.524654 | 1.502410 | 0.34625 |

| rev_rating | 0.264813 | -1.446970 | 2.049883 | 0.77250 | -1.776420 | -5.514831 | 1.733292 | 0.3300 | -1.511607 | -5.711824 | 2.936374 | 0.50500 |

| num_spots | 0.019149 | -0.038408 | 0.078050 | 0.52750 | 0.203178 | 0.108604 | 0.297708 | 0.0000 | 0.222327 | 0.159254 | 0.286293 | 0.00000 |

| crowded | -1.078246 | -3.258292 | 1.271095 | 0.36250 | -1.390266 | -4.513828 | 1.821181 | 0.3975 | -2.468513 | -4.821151 | 0.096495 | 0.06000 |

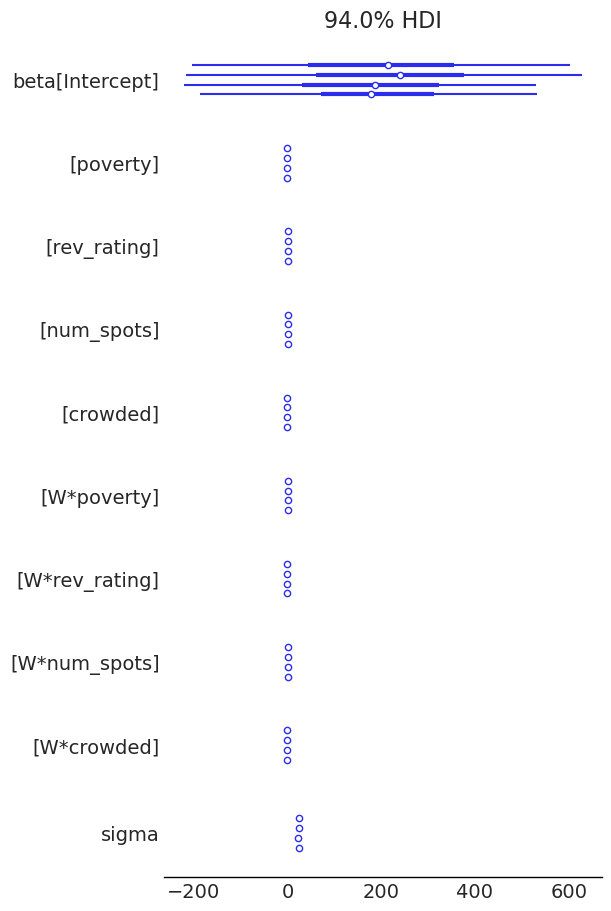

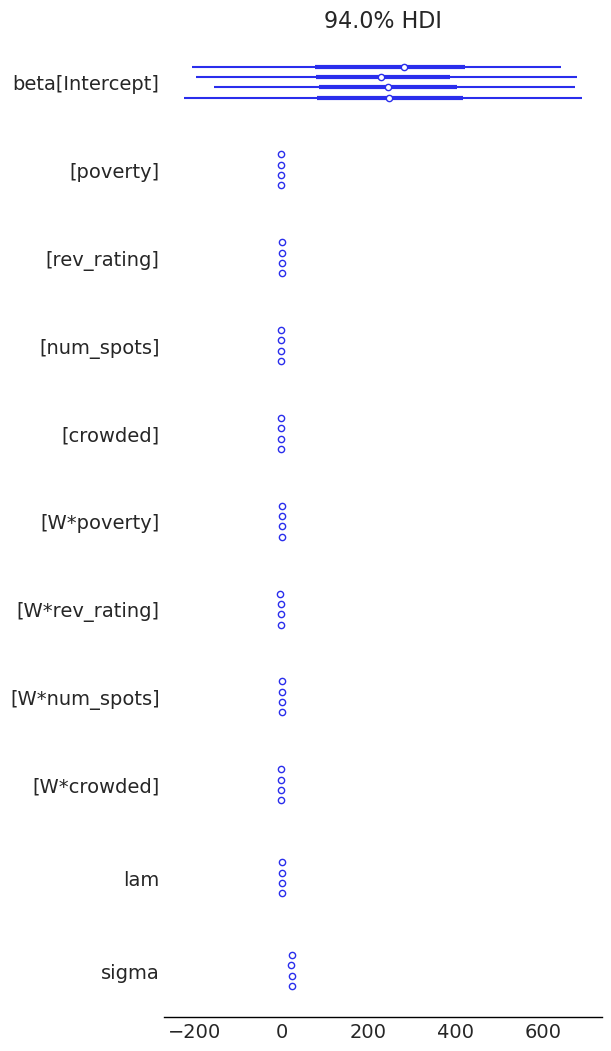

az.plot_forest(slx.inference_data)

display(diagnostics_table(slx.inference_data, ["beta", "sigma"]))

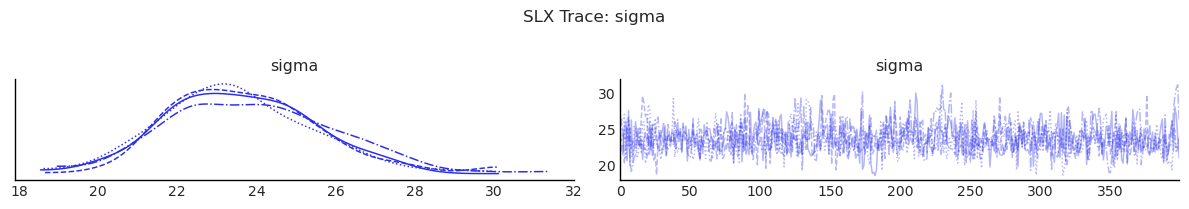

show_trace(slx.inference_data, ["sigma"], "SLX Trace: sigma")

| mean | sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|

| beta[Intercept] | 198.403 | 214.440 | 650.362 | 734.798 | 1.005 |

| beta[poverty] | -0.655 | 0.455 | 826.660 | 854.663 | 1.000 |

| beta[rev_rating] | 0.265 | 0.911 | 885.762 | 734.053 | 1.009 |

| beta[num_spots] | 0.019 | 0.030 | 774.424 | 820.683 | 1.000 |

| beta[crowded] | -1.078 | 1.176 | 893.113 | 1080.138 | 1.001 |

| beta[W*poverty] | 1.148 | 0.692 | 709.801 | 834.838 | 1.003 |

| beta[W*rev_rating] | -1.776 | 1.847 | 731.538 | 815.410 | 1.004 |

| beta[W*num_spots] | 0.203 | 0.048 | 766.181 | 782.735 | 1.002 |

| beta[W*crowded] | -1.390 | 1.666 | 769.779 | 983.167 | 1.001 |

| sigma | 23.717 | 2.027 | 1021.307 | 614.013 | 1.007 |

/tmp/ipykernel_7113/3581438262.py:11: UserWarning: The figure layout has changed to tight

plt.tight_layout()

2) SAR: Spatial Lag of Outcome¶

Model:

Interpretation:

\(\rho\) controls feedback from neighboring outcomes.

Effects are amplified through \((I - \rho W)^{-1}\), so direct and indirect impacts differ from raw \(\beta\).

sar, summary_sar, effects_sar = fit_and_report(

SAR,

formula="price_pp ~ poverty + rev_rating + num_spots + crowded",

data=gdf,

W=W,

)

display(summary_sar)

display(effects_sar)

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 2 jobs)

NUTS: [rho, beta, sigma]

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

Sampling 4 chains for 400 tune and 400 draw iterations (1_600 + 1_600 draws total) took 17 seconds.

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | -49.276 | 81.126 | -199.623 | 104.468 | 3.013 | 2.155 | 725.708 | 865.937 | 1.006 |

| poverty | 0.102 | 0.299 | -0.423 | 0.706 | 0.008 | 0.006 | 1324.847 | 1219.618 | 1.001 |

| rev_rating | 0.969 | 0.842 | -0.740 | 2.418 | 0.031 | 0.022 | 745.602 | 874.384 | 1.004 |

| num_spots | 0.076 | 0.025 | 0.034 | 0.126 | 0.001 | 0.001 | 1076.119 | 1015.372 | 1.000 |

| crowded | -1.741 | 0.860 | -3.355 | -0.230 | 0.024 | 0.020 | 1295.382 | 873.380 | 1.001 |

| rho | 0.443 | 0.139 | 0.185 | 0.693 | 0.004 | 0.003 | 991.221 | 891.549 | 1.000 |

| sigma | 24.339 | 2.156 | 20.406 | 28.327 | 0.063 | 0.059 | 1175.325 | 1113.562 | 1.003 |

| direct | direct_ci_lower | direct_ci_upper | direct_pvalue | indirect | indirect_ci_lower | indirect_ci_upper | indirect_pvalue | total | total_ci_lower | total_ci_upper | total_pvalue | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| variable | ||||||||||||

| poverty | 0.107294 | -0.506917 | 0.740681 | 0.73000 | 0.069012 | -0.503319 | 0.688781 | 0.73375 | 0.176306 | -0.987863 | 1.393020 | 0.73000 |

| rev_rating | 1.035809 | -0.729173 | 2.809097 | 0.24250 | 0.871229 | -0.494138 | 3.531787 | 0.24625 | 1.907038 | -1.180677 | 6.143653 | 0.24250 |

| num_spots | 0.080435 | 0.033317 | 0.130604 | 0.00000 | 0.059244 | 0.015922 | 0.131901 | 0.00375 | 0.139679 | 0.062623 | 0.240899 | 0.00000 |

| crowded | -1.847340 | -3.563650 | -0.176338 | 0.02875 | -1.399176 | -3.965908 | -0.094650 | 0.03250 | -3.246516 | -6.958233 | -0.359249 | 0.02875 |

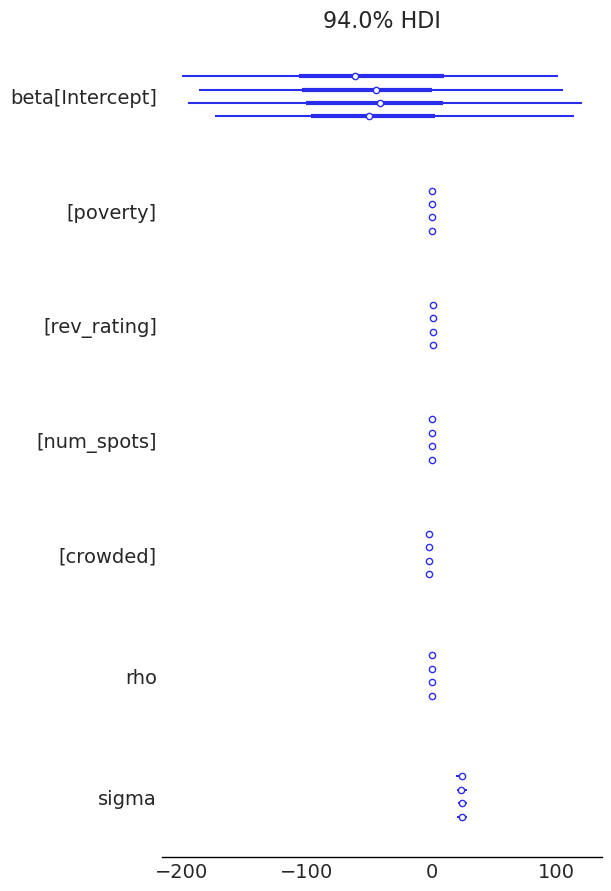

az.plot_forest(sar.inference_data)

display(diagnostics_table(sar.inference_data, ["rho", "beta", "sigma"]))

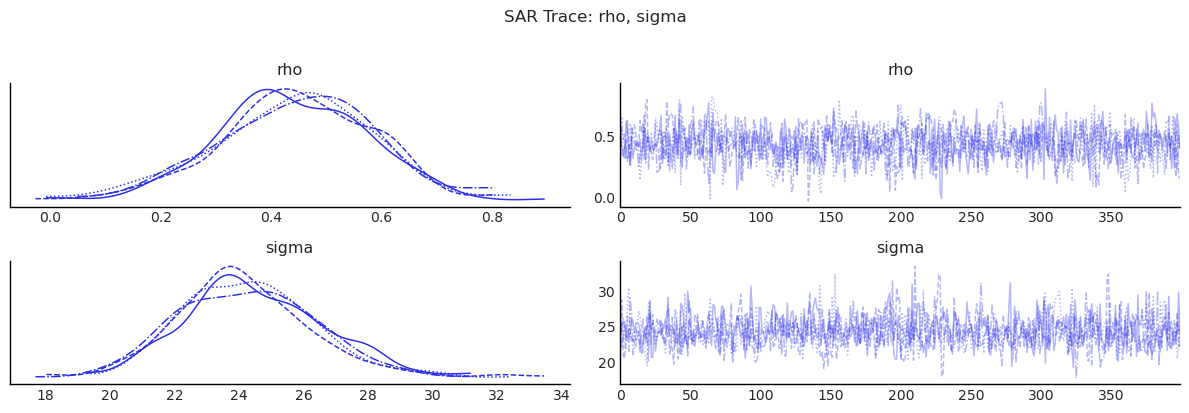

show_trace(sar.inference_data, ["rho", "sigma"], "SAR Trace: rho, sigma")

| mean | sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|

| rho | 0.443 | 0.139 | 991.221 | 891.549 | 1.000 |

| beta[Intercept] | -49.276 | 81.126 | 725.708 | 865.937 | 1.006 |

| beta[poverty] | 0.102 | 0.299 | 1324.847 | 1219.618 | 1.001 |

| beta[rev_rating] | 0.969 | 0.842 | 745.602 | 874.384 | 1.004 |

| beta[num_spots] | 0.076 | 0.025 | 1076.119 | 1015.372 | 1.000 |

| beta[crowded] | -1.741 | 0.860 | 1295.382 | 873.380 | 1.001 |

| sigma | 24.339 | 2.156 | 1175.325 | 1113.562 | 1.003 |

/tmp/ipykernel_7113/3581438262.py:11: UserWarning: The figure layout has changed to tight

plt.tight_layout()

3) SEM: Spatial Correlation in Errors¶

Model:

Interpretation:

Spatial dependence is moved to latent shocks rather than outcome feedback.

Useful when omitted spatial factors induce correlated residuals.

sem, summary_sem, effects_sem = fit_and_report(

SEM,

formula="price_pp ~ poverty + rev_rating + num_spots + crowded",

data=gdf,

W=W,

)

display(summary_sem)

display(effects_sem)

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 2 jobs)

NUTS: [lam, beta, sigma]

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

Sampling 4 chains for 400 tune and 400 draw iterations (1_600 + 1_600 draws total) took 14 seconds.

There were 4 divergences after tuning. Increase `target_accept` or reparameterize.

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | -27.196 | 77.808 | -179.249 | 107.308 | 3.122 | 2.412 | 621.425 | 726.421 | 1.008 |

| poverty | -0.111 | 0.449 | -0.914 | 0.770 | 0.015 | 0.010 | 942.050 | 1069.055 | 1.004 |

| rev_rating | 1.119 | 0.826 | -0.317 | 2.691 | 0.033 | 0.025 | 619.239 | 717.234 | 1.008 |

| num_spots | 0.072 | 0.034 | 0.007 | 0.132 | 0.001 | 0.001 | 766.505 | 862.262 | 1.003 |

| crowded | -1.818 | 1.158 | -4.037 | 0.361 | 0.036 | 0.028 | 1039.232 | 972.899 | 1.004 |

| lam | 0.505 | 0.179 | 0.118 | 0.790 | 0.008 | 0.005 | 556.334 | 475.293 | 1.003 |

| sigma | 25.043 | 2.227 | 21.051 | 29.174 | 0.068 | 0.057 | 1065.389 | 1073.024 | 1.002 |

| direct | direct_ci_lower | direct_ci_upper | direct_pvalue | indirect | indirect_ci_lower | indirect_ci_upper | indirect_pvalue | total | total_ci_lower | total_ci_upper | total_pvalue | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| variable | ||||||||||||

| poverty | -0.110960 | -1.048006 | 0.741693 | 0.84500 | 0.0 | 0.0 | 0.0 | 0.0 | -0.110960 | -1.048006 | 0.741693 | 0.84500 |

| rev_rating | 1.119062 | -0.503201 | 2.713468 | 0.16125 | 0.0 | 0.0 | 0.0 | 0.0 | 1.119062 | -0.503201 | 2.713468 | 0.16125 |

| num_spots | 0.071912 | 0.005288 | 0.135631 | 0.03875 | 0.0 | 0.0 | 0.0 | 0.0 | 0.071912 | 0.005288 | 0.135631 | 0.03875 |

| crowded | -1.817735 | -4.069739 | 0.496977 | 0.13250 | 0.0 | 0.0 | 0.0 | 0.0 | -1.817735 | -4.069739 | 0.496977 | 0.13250 |

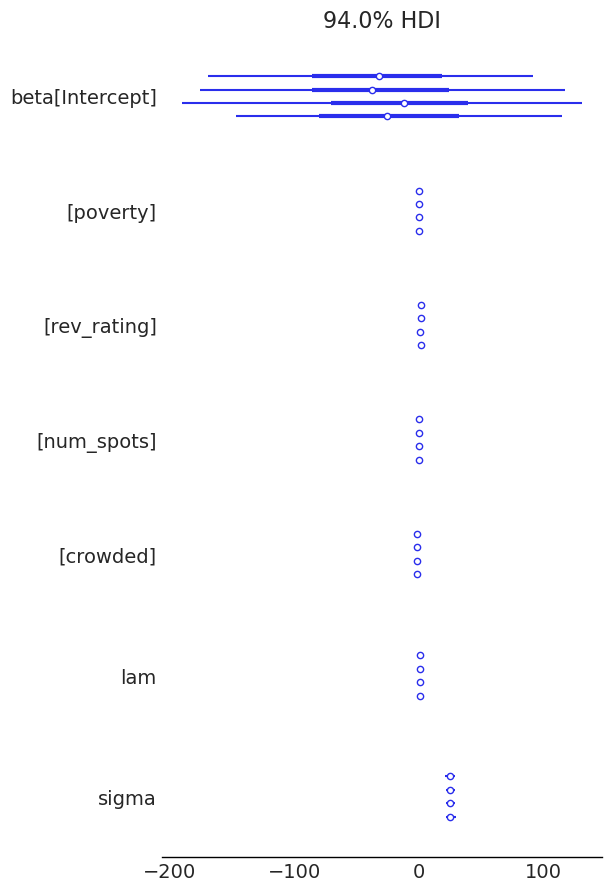

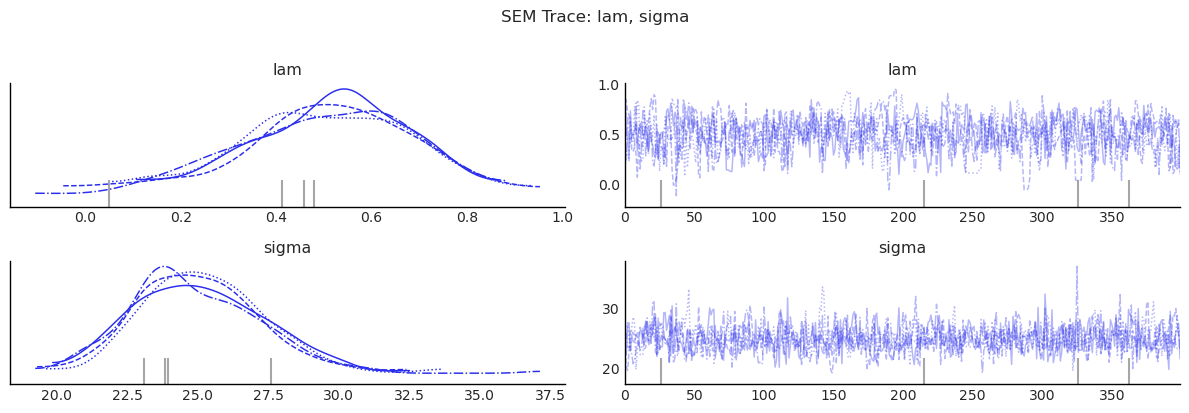

az.plot_forest(sem.inference_data)

display(diagnostics_table(sem.inference_data, ["lam", "beta", "sigma"]))

show_trace(sem.inference_data, ["lam", "sigma"], "SEM Trace: lam, sigma")

| mean | sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|

| lam | 0.505 | 0.179 | 556.334 | 475.293 | 1.003 |

| beta[Intercept] | -27.196 | 77.808 | 621.425 | 726.421 | 1.008 |

| beta[poverty] | -0.111 | 0.449 | 942.050 | 1069.055 | 1.004 |

| beta[rev_rating] | 1.119 | 0.826 | 619.239 | 717.234 | 1.008 |

| beta[num_spots] | 0.072 | 0.034 | 766.505 | 862.262 | 1.003 |

| beta[crowded] | -1.818 | 1.158 | 1039.232 | 972.899 | 1.004 |

| sigma | 25.043 | 2.227 | 1065.389 | 1073.024 | 1.002 |

/tmp/ipykernel_7113/3581438262.py:11: UserWarning: The figure layout has changed to tight

plt.tight_layout()

4) SDM: SAR + SLX¶

Model:

Interpretation:

Includes both outcome feedback and neighbor-covariate channels.

Often used as a flexible nesting model for SAR/SLX.

sdm, summary_sdm, effects_sdm = fit_and_report(

SDM,

formula="price_pp ~ poverty + rev_rating + num_spots + crowded",

data=gdf,

W=W,

)

display(summary_sdm)

display(effects_sdm)

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 2 jobs)

NUTS: [rho, beta, sigma]

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

Sampling 4 chains for 400 tune and 400 draw iterations (1_600 + 1_600 draws total) took 30 seconds.

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | 197.552 | 203.064 | -179.432 | 571.527 | 7.678 | 5.043 | 702.865 | 979.518 | 1.002 |

| poverty | -0.677 | 0.445 | -1.530 | 0.133 | 0.015 | 0.012 | 895.828 | 803.585 | 1.003 |

| rev_rating | 0.378 | 0.907 | -1.282 | 2.117 | 0.026 | 0.024 | 1209.213 | 1245.381 | 1.000 |

| num_spots | 0.016 | 0.031 | -0.043 | 0.072 | 0.001 | 0.001 | 1185.523 | 1039.527 | 1.002 |

| crowded | -1.085 | 1.121 | -3.363 | 0.781 | 0.040 | 0.027 | 788.075 | 839.000 | 1.000 |

| W*poverty | 1.029 | 0.665 | -0.281 | 2.203 | 0.024 | 0.015 | 799.091 | 959.339 | 1.001 |

| W*rev_rating | -2.025 | 1.808 | -5.366 | 1.271 | 0.065 | 0.043 | 782.291 | 1059.001 | 1.002 |

| W*num_spots | 0.170 | 0.052 | 0.073 | 0.267 | 0.002 | 0.001 | 968.196 | 1082.774 | 1.006 |

| W*crowded | -0.923 | 1.690 | -4.083 | 2.335 | 0.059 | 0.040 | 834.732 | 813.964 | 1.001 |

| rho | 0.230 | 0.168 | -0.096 | 0.529 | 0.005 | 0.004 | 1069.556 | 1056.787 | 1.001 |

| sigma | 23.193 | 1.968 | 19.979 | 27.426 | 0.063 | 0.049 | 1029.107 | 1201.134 | 1.006 |

| direct | direct_ci_lower | direct_ci_upper | direct_pvalue | indirect | indirect_ci_lower | indirect_ci_upper | indirect_pvalue | total | total_ci_lower | total_ci_upper | total_pvalue | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| variable | ||||||||||||

| poverty | -0.636300 | -1.511830 | 0.204442 | 0.12625 | 1.074056 | -0.512864 | 2.567043 | 0.17000 | 0.437756 | -0.998291 | 1.783824 | 0.5100 |

| rev_rating | 0.254095 | -1.568348 | 2.156596 | 0.77750 | -2.573229 | -7.978988 | 2.106844 | 0.31125 | -2.319134 | -8.947258 | 3.287885 | 0.4625 |

| num_spots | 0.025322 | -0.035468 | 0.082774 | 0.40250 | 0.220444 | 0.116234 | 0.350051 | 0.00000 | 0.245765 | 0.162914 | 0.361073 | 0.0000 |

| crowded | -1.145194 | -3.223130 | 0.967304 | 0.27250 | -1.474455 | -5.410931 | 2.346805 | 0.44500 | -2.619650 | -6.107759 | 0.632563 | 0.1050 |

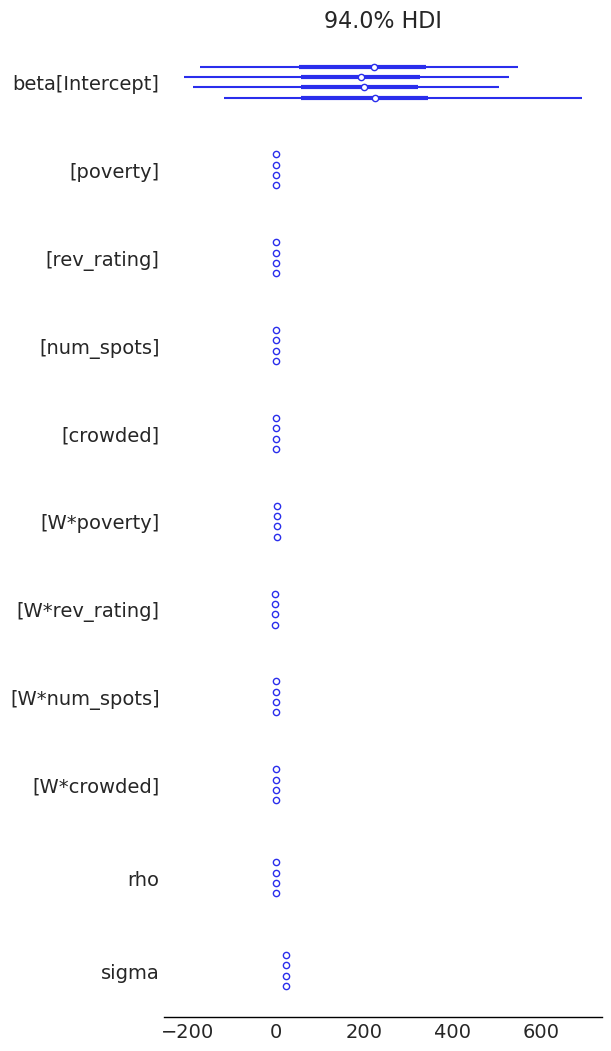

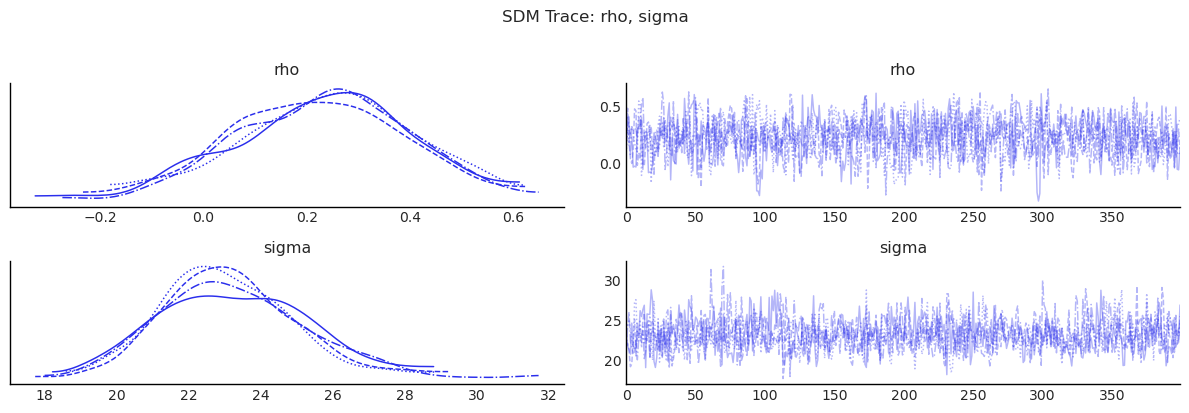

az.plot_forest(sdm.inference_data)

display(diagnostics_table(sdm.inference_data, ["rho", "beta", "sigma"]))

show_trace(sdm.inference_data, ["rho", "sigma"], "SDM Trace: rho, sigma")

| mean | sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|

| rho | 0.230 | 0.168 | 1069.556 | 1056.787 | 1.001 |

| beta[Intercept] | 197.552 | 203.064 | 702.865 | 979.518 | 1.002 |

| beta[poverty] | -0.677 | 0.445 | 895.828 | 803.585 | 1.003 |

| beta[rev_rating] | 0.378 | 0.907 | 1209.213 | 1245.381 | 1.000 |

| beta[num_spots] | 0.016 | 0.031 | 1185.523 | 1039.527 | 1.002 |

| beta[crowded] | -1.085 | 1.121 | 788.075 | 839.000 | 1.000 |

| beta[W*poverty] | 1.029 | 0.665 | 799.091 | 959.339 | 1.001 |

| beta[W*rev_rating] | -2.025 | 1.808 | 782.291 | 1059.001 | 1.002 |

| beta[W*num_spots] | 0.170 | 0.052 | 968.196 | 1082.774 | 1.006 |

| beta[W*crowded] | -0.923 | 1.690 | 834.732 | 813.964 | 1.001 |

| sigma | 23.193 | 1.968 | 1029.107 | 1201.134 | 1.006 |

/tmp/ipykernel_7113/3581438262.py:11: UserWarning: The figure layout has changed to tight

plt.tight_layout()

5) SDEM: SLX + Spatial Error¶

Model:

Interpretation:

Neighbor covariates matter directly (through \(WX\)),

and unmodeled shocks are spatially correlated (through \(u\)).

sdem, summary_sdem, effects_sdem = fit_and_report(

SDEM,

formula="price_pp ~ poverty + rev_rating + num_spots + crowded",

data=gdf,

W=W,

)

display(summary_sdem)

display(effects_sdem)

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (4 chains in 2 jobs)

NUTS: [lam, beta, sigma]

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

Sampling 4 chains for 400 tune and 400 draw iterations (1_600 + 1_600 draws total) took 26 seconds.

There were 6 divergences after tuning. Increase `target_accept` or reparameterize.

The rhat statistic is larger than 1.01 for some parameters. This indicates problems during sampling. See https://arxiv.org/abs/1903.08008 for details

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| Intercept | 250.173 | 240.167 | -213.748 | 661.775 | 11.533 | 6.177 | 437.557 | 910.985 | 1.016 |

| poverty | -0.659 | 0.424 | -1.493 | 0.068 | 0.013 | 0.009 | 1055.612 | 1061.682 | 1.008 |

| rev_rating | 0.244 | 0.966 | -1.639 | 2.071 | 0.036 | 0.022 | 739.208 | 1085.292 | 1.010 |

| num_spots | 0.028 | 0.030 | -0.034 | 0.081 | 0.001 | 0.001 | 1042.504 | 967.294 | 1.002 |

| crowded | -1.096 | 1.102 | -3.145 | 1.056 | 0.033 | 0.024 | 1122.155 | 994.902 | 1.003 |

| W*poverty | 0.954 | 0.695 | -0.356 | 2.230 | 0.025 | 0.016 | 794.642 | 921.693 | 1.003 |

| W*rev_rating | -2.257 | 1.909 | -5.604 | 1.352 | 0.088 | 0.046 | 470.999 | 826.123 | 1.013 |

| W*num_spots | 0.187 | 0.052 | 0.090 | 0.282 | 0.002 | 0.001 | 944.282 | 1022.535 | 1.005 |

| W*crowded | -1.579 | 1.876 | -5.151 | 1.919 | 0.059 | 0.051 | 1026.080 | 1274.800 | 1.001 |

| lam | 0.324 | 0.191 | -0.045 | 0.663 | 0.006 | 0.004 | 987.384 | 1073.611 | 1.005 |

| sigma | 23.302 | 1.983 | 19.909 | 27.125 | 0.056 | 0.050 | 1268.999 | 1167.931 | 1.002 |

| direct | direct_ci_lower | direct_ci_upper | direct_pvalue | indirect | indirect_ci_lower | indirect_ci_upper | indirect_pvalue | total | total_ci_lower | total_ci_upper | total_pvalue | |

|---|---|---|---|---|---|---|---|---|---|---|---|---|

| variable | ||||||||||||

| poverty | -0.659241 | -1.458998 | 0.215810 | 0.12125 | 0.953790 | -0.428991 | 2.285650 | 0.17750 | 0.294549 | -1.077492 | 1.567065 | 0.66125 |

| rev_rating | 0.244189 | -1.765513 | 2.127001 | 0.78250 | -2.257267 | -6.068413 | 1.342616 | 0.25125 | -2.013078 | -7.167563 | 2.545931 | 0.43125 |

| num_spots | 0.027988 | -0.035114 | 0.086002 | 0.32500 | 0.186729 | 0.087106 | 0.290087 | 0.00000 | 0.214717 | 0.124809 | 0.302384 | 0.00000 |

| crowded | -1.096143 | -3.246375 | 1.104345 | 0.32875 | -1.578919 | -5.224640 | 2.135995 | 0.38000 | -2.675061 | -5.847786 | 0.948444 | 0.11125 |

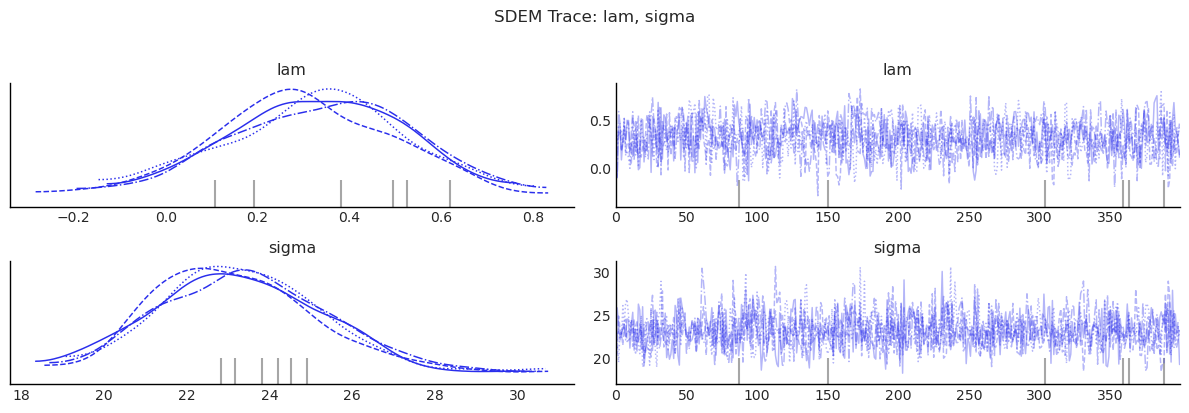

az.plot_forest(sdem.inference_data)

display(diagnostics_table(sdem.inference_data, ["lam", "beta", "sigma"]))

show_trace(sdem.inference_data, ["lam", "sigma"], "SDEM Trace: lam, sigma")

| mean | sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|

| lam | 0.324 | 0.191 | 987.384 | 1073.611 | 1.005 |

| beta[Intercept] | 250.173 | 240.167 | 437.557 | 910.985 | 1.016 |

| beta[poverty] | -0.659 | 0.424 | 1055.612 | 1061.682 | 1.008 |

| beta[rev_rating] | 0.244 | 0.966 | 739.208 | 1085.292 | 1.010 |

| beta[num_spots] | 0.028 | 0.030 | 1042.504 | 967.294 | 1.002 |

| beta[crowded] | -1.096 | 1.102 | 1122.155 | 994.902 | 1.003 |

| beta[W*poverty] | 0.954 | 0.695 | 794.642 | 921.693 | 1.003 |

| beta[W*rev_rating] | -2.257 | 1.909 | 470.999 | 826.123 | 1.013 |

| beta[W*num_spots] | 0.187 | 0.052 | 944.282 | 1022.535 | 1.005 |

| beta[W*crowded] | -1.579 | 1.876 | 1026.080 | 1274.800 | 1.001 |

| sigma | 23.302 | 1.983 | 1268.999 | 1167.931 | 1.002 |

/tmp/ipykernel_7113/3581438262.py:11: UserWarning: The figure layout has changed to tight

plt.tight_layout()

MCMC Sampling Adequacy¶

Bayesian estimation of spatial models has a well-known efficiency

pitfall: the spatial dependence parameter \(\rho\) (or \(\lambda\)) often

mixes slowly, so a chain that looks converged in its point estimate

can still produce posterior credible intervals that are 10–12 % too

narrow because the sampler has not visited the tails enough times

[Wolf et al., 2018]. The

spatial_mcmc_diagnostic helper checks effective sample size, sampler

yield, \(\hat{R}\), and HPDI stability for the spatial parameter and

warns when any threshold is violated.

from bayespecon import spatial_mcmc_diagnostic

# Run on the SDM (most parameters; both rho and impact summaries depend on it)

report = spatial_mcmc_diagnostic(sdm, emit_warnings=False)

report.to_frame()

| ess_bulk | ess_tail | r_hat | mcse_mean | yield_pct | hpdi_drift_pct | adequate | |

|---|---|---|---|---|---|---|---|

| parameter | |||||||

| rho | 1069.556086 | 1056.786799 | 1.00133 | 0.005163 | 66.847255 | 3.827624 | True |

sdm.spatial_diagnostics()

| statistic | median | df | p_value | ci_lower | ci_upper | |

|---|---|---|---|---|---|---|

| test | ||||||

| LM-Error-SDM | 1.020552 | 0.407088 | 1 | 0.312388 | 0.001056 | 5.954121 |

| Robust-LM-Error-SDM | 4.832126 | 4.622864 | 1 | 0.027934 | 0.036720 | 11.323437 |

ols.spatial_diagnostics()

| statistic | median | df | p_value | ci_lower | ci_upper | |

|---|---|---|---|---|---|---|

| test | ||||||

| LM-Lag | 22.079712 | 10.588424 | 1 | 0.000003 | 0.015565 | 103.588908 |

| LM-Error | 4.040401 | 3.188498 | 1 | 0.044423 | 0.717507 | 12.567629 |

| LM-SDM-Joint | 3649.701192 | 1572.442319 | 5 | 0.000000 | 25.179629 | 18701.613847 |

| LM-SLX-Error-Joint | 3651.437896 | 1575.318771 | 5 | 0.000000 | 25.791326 | 18706.073180 |

| Robust-LM-Lag | 12.028354 | 11.394298 | 1 | 0.000524 | 5.623500 | 21.461866 |

| Robust-LM-Error | 5.906351 | 5.595007 | 1 | 0.015086 | 2.761339 | 10.538541 |

sar.spatial_diagnostics_decision()

sem.spatial_diagnostics()

| statistic | median | df | p_value | ci_lower | ci_upper | |

|---|---|---|---|---|---|---|

| test | ||||||

| LM-Lag | 178.159853 | 58.530743 | 1 | 0.000000 | 0.106393 | 1024.410783 |

| LM-WX | 27369.491190 | 6859.925190 | 4 | 0.000000 | 68.184423 | 154209.397357 |

| Robust-LM-Lag | 1.972060 | 0.910857 | 1 | 0.160229 | 0.002683 | 9.301223 |

| Robust-LM-WX | 924.248356 | 446.509084 | 4 | 0.000000 | 12.548655 | 4578.160719 |

report

SpatialMCMCReport(parameters=['rho'], ess_bulk={'rho': 1069.556086208102}, ess_tail={'rho': 1056.7867993879127}, r_hat={'rho': 1.0013301817996323}, mcse_mean={'rho': 0.005163045813358309}, nominal_size=1600, yield_pct={'rho': 66.84725538800637}, hpdi_drift_pct={'rho': 3.8276241815940053}, warnings_triggered=[], adequate=True, adequate_by_param={'rho': True})

Model Comparison¶

# Collect all fitted models for comparison

model_dict = {

"OLS": ols,

"SLX": slx,

"SAR": sar,

"SEM": sem,

"SDM": sdm,

"SDEM": sdem,

}

idata_dict = {name: m.inference_data for name, m in model_dict.items()}

Bayes Factor Model Comparison¶

Bayes factors provide an alternative to information criteria for comparing competing models. While WAIC and LOO assess predictive performance, Bayes factors compare marginal likelihoods — the probability of the data under each model, integrated over all parameter values weighted by the prior:

This makes Bayes factors sensitive to the prior: models with diffuse priors on unnecessary parameters are penalized more heavily, because the marginal likelihood averages over all possible parameter values weighted by the prior. In spatial models, this means that models with many WX coefficients under wide priors (e.g., SLX, SDM, SDEM) can receive substantially lower marginal likelihoods than more parsimonious alternatives (e.g., OLS, SAR, SEM) — even if their log-likelihoods are similar.

Interpreting Bayes factors (Kass & Raftery, 1995):

BF range |

Evidence strength |

|---|---|

1 – 3 |

Anecdotal |

3 – 10 |

Moderate |

10 – 30 |

Strong |

30 – 100 |

Very strong |

> 100 |

Extreme |

Method. We use bridge sampling (Meng & Wong, 1996) to estimate each model’s log marginal likelihood, following the R bridgesampling package (Gronau et al., 2020). The implementation uses ESS weighting, two-phase convergence, and MCSE diagnostics. For reliable estimates, 40,000+ posterior samples are recommended.

Important caveat. Bayes factors can be very sensitive to prior specification. Models with diffuse priors on many parameters will tend to have lower marginal likelihoods. This is by design — it is the Bayesian Occam’s razor at work — but it means that Bayes factors and information criteria may disagree when priors are uninformative.

bayes_factor_compare_models(model_dict, method="bridge").round(3)

/tmp/ipykernel_7113/3295012235.py:1: UserWarning: Bridge sampling with 1600 posterior samples for 'OLS' may yield imprecise marginal-likelihood estimates. A conservative rule of thumb is 40,000+ samples (Gronau, Singmann, & Wagenmakers, 2017).

bayes_factor_compare_models(model_dict, method="bridge").round(3)

| OLS | SLX | SAR | SEM | SDM | SDEM | |

|---|---|---|---|---|---|---|

| OLS | 1.000 | 6.390332e+20 | 0.066 | 0.179 | 1.016243e+21 | 5.730165e+20 |

| SLX | 0.000 | 1.000000e+00 | 0.000 | 0.000 | 1.590000e+00 | 8.970000e-01 |

| SAR | 15.209 | 9.719181e+21 | 1.000 | 2.730 | 1.545624e+22 | 8.715118e+21 |

| SEM | 5.571 | 3.560225e+21 | 0.366 | 1.000 | 5.661763e+21 | 3.192428e+21 |

| SDM | 0.000 | 6.290000e-01 | 0.000 | 0.000 | 1.000000e+00 | 5.640000e-01 |

| SDEM | 0.000 | 1.115000e+00 | 0.000 | 0.000 | 1.773000e+00 | 1.000000e+00 |

bayes_factor_compare_models(model_dict, method="bic").round(3)

| OLS | SLX | SAR | SEM | SDM | SDEM | |

|---|---|---|---|---|---|---|

| OLS | 1.000 | 0.310 | 0.124 | 1.648 | 0.906 | 1.454 |

| SLX | 3.222 | 1.000 | 0.400 | 5.310 | 2.920 | 4.684 |

| SAR | 8.051 | 2.499 | 1.000 | 13.268 | 7.296 | 11.704 |

| SEM | 0.607 | 0.188 | 0.075 | 1.000 | 0.550 | 0.882 |

| SDM | 1.103 | 0.342 | 0.137 | 1.818 | 1.000 | 1.604 |

| SDEM | 0.688 | 0.213 | 0.085 | 1.134 | 0.623 | 1.000 |

Bridge Sampling vs. BIC¶

BIC: SAR > SLX > OLS > SEM (SAR wins, SLX is moderate, SEM is worst)

Bridge: SAR > SEM > OLS (SAR wins, SEM is moderate)

The two methods can produce qualitatively different model rankings. This is expected and reflects a fundamental difference in what they assume about priors:

BIC approximates \(\log(ML) \approx \hat\ell_{\max} - \frac{k}{2}\log(n)\), which assumes unit-information priors (priors containing as much information as a single observation). The penalty per parameter is fixed at \(\frac{1}{2}\log(n) \approx 2.1\) for \(n = 77\).

Bridge sampling integrates over the actual priors in the model. When priors are wide (e.g.,

Normal(0, 100)on WX coefficients), the marginal likelihood penalizes each such parameter by roughly \(\log(\sigma_{\text{prior}} / \sigma_{\text{post}})\), which can be 5–10× larger than the BIC penalty.

This is why models with many WX terms (SLX, SDM, SDEM) may look reasonable under BIC but receive extreme Bayes factors under bridge sampling: the wide priors on the WX coefficients are “wasted” — they spread probability mass over implausible parameter values, reducing the marginal likelihood. This is Bayesian Occam’s razor at work, and bridge sampling is generally more trustworthy because it accounts for the actual prior specification.

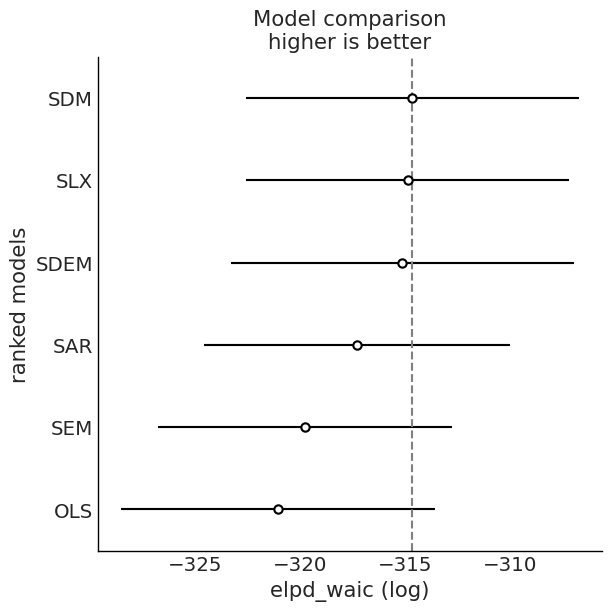

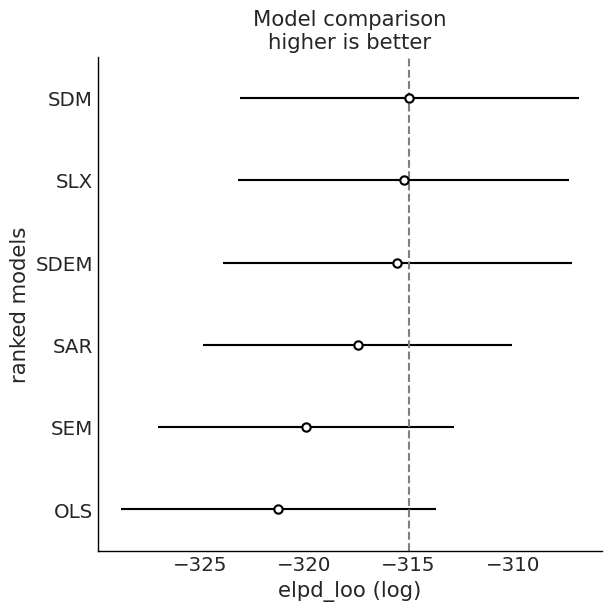

Model Comparison: WAIC and LOO¶

We compare fitted models using information criteria from ArviZ.

# WAIC and LOO comparison

for ic in ("waic", "loo"):

cmp = az.compare(idata_dict, ic=ic, method="BB-pseudo-BMA")

az.plot_compare(cmp)

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:1652: UserWarning: For one or more samples the posterior variance of the log predictive densities exceeds 0.4. This could be indication of WAIC starting to fail.

See http://arxiv.org/abs/1507.04544 for details

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:782: UserWarning: Estimated shape parameter of Pareto distribution is greater than 0.69 for one or more samples. You should consider using a more robust model, this is because importance sampling is less likely to work well if the marginal posterior and LOO posterior are very different. This is more likely to happen with a non-robust model and highly influential observations.

warnings.warn(

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/arviz/stats/stats.py:782: UserWarning: Estimated shape parameter of Pareto distribution is greater than 0.69 for one or more samples. You should consider using a more robust model, this is because importance sampling is less likely to work well if the marginal posterior and LOO posterior are very different. This is more likely to happen with a non-robust model and highly influential observations.

warnings.warn(

Bayesian LM Specification Tests¶

The bayespecon.diagnostics module provides Bayesian Lagrange-multiplier tests that operate on posterior draws rather than point estimates. These replace the frequentist LM tests that were previously available.

Below we run the Bayesian LM tests from the fitted OLS model:

bayesian_lm_lag_test— tests \(H_0: \rho = 0\) (no spatial lag)bayesian_lm_error_test— tests \(H_0: \lambda = 0\) (no spatial error)bayesian_lm_wx_test— tests \(H_0: \gamma = 0\) (no WX, from SAR null)bayesian_lm_sdm_joint_test— joint test for SDM (\(H_0: \rho = 0\) and \(\gamma = 0\))bayesian_lm_slx_error_joint_test— joint test for SDEM (\(H_0: \lambda = 0\) and \(\gamma = 0\))bayesian_robust_lm_lag_test/bayesian_robust_lm_error_test— Anselin–Florax robust pair (SAR vs SEM)bayesian_robust_lm_lag_sdm_test/bayesian_robust_lm_wx_test/bayesian_robust_lm_error_sdem_test— Neyman-orthogonal robust testsbayesian_lm_error_sdm_test/bayesian_lm_lag_sdem_test— SDM/SDEM-aware tests using correctly filtered residuals

In practice, prefer the method API: model.spatial_diagnostics() runs the tests wired to that model class, and model.spatial_diagnostics_decision() returns a recommended specification.

Each returns a BayesianLMTestResult with posterior summary statistics and a Bayesian p-value.

# `spatial_diagnostics()` runs all tests wired to a model class

# and returns a tidy DataFrame.

ols.spatial_diagnostics()

| statistic | median | df | p_value | ci_lower | ci_upper | |

|---|---|---|---|---|---|---|

| test | ||||||

| LM-Lag | 22.079712 | 10.588424 | 1 | 0.000003 | 0.015565 | 103.588908 |

| LM-Error | 4.040401 | 3.188498 | 1 | 0.044423 | 0.717507 | 12.567629 |

| LM-SDM-Joint | 3649.701192 | 1572.442319 | 5 | 0.000000 | 25.179629 | 18701.613847 |

| LM-SLX-Error-Joint | 3651.437896 | 1575.318771 | 5 | 0.000000 | 25.791326 | 18706.073180 |

| Robust-LM-Lag | 12.028354 | 11.394298 | 1 | 0.000524 | 5.623500 | 21.461866 |

| Robust-LM-Error | 5.906351 | 5.595007 | 1 | 0.015086 | 2.761339 | 10.538541 |

Compare Spatial Parameters Across Models¶

This cell compares posterior means/intervals for the spatial scalar where present:

rhoin SAR/SDMlamin SEM/SDEM

# Compare spatial parameters across models

spatial_rows = []

for name, model, var in [

("SAR", sar, "rho"),

("SEM", sem, "lam"),

("SDM", sdm, "rho"),

("SDEM", sdem, "lam"),

]:

if var in model.inference_data.posterior:

summary = az.summary(model.inference_data, var_names=[var], round_to=3)

summary.insert(0, "model", name)

spatial_rows.append(summary)

pd.concat(spatial_rows)

| model | mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|---|

| rho | SAR | 0.443 | 0.139 | 0.185 | 0.693 | 0.004 | 0.003 | 991.221 | 891.549 | 1.000 |

| lam | SEM | 0.505 | 0.179 | 0.118 | 0.790 | 0.008 | 0.005 | 556.334 | 475.293 | 1.003 |

| rho | SDM | 0.230 | 0.168 | -0.096 | 0.529 | 0.005 | 0.004 | 1069.556 | 1056.787 | 1.001 |

| lam | SDEM | 0.324 | 0.191 | -0.045 | 0.663 | 0.006 | 0.004 | 987.384 | 1073.611 | 1.005 |

Matrix-Mode API Example (Optional)¶

The package also supports direct matrix inputs. This is useful if your design matrix is already engineered.

y = gdf[ycol]

X = gdf[xcols]

sar_matrix = SAR(y=y, X=X, W=W)

sar_matrix.fit(draws=200, tune=200, chains=2, random_seed=7, progressbar=False)

display(sar_matrix.summary(round_to=3))

Initializing NUTS using jitter+adapt_diag...

Multiprocess sampling (2 chains in 2 jobs)

NUTS: [rho, beta, sigma]

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

/home/runner/micromamba/envs/test/lib/python3.14/site-packages/pymc/step_methods/hmc/quadpotential.py:316: RuntimeWarning: overflow encountered in dot

return 0.5 * np.dot(x, v_out)

Sampling 2 chains for 200 tune and 200 draw iterations (400 + 400 draws total) took 5 seconds.

We recommend running at least 4 chains for robust computation of convergence diagnostics

The rhat statistic is larger than 1.01 for some parameters. This indicates problems during sampling. See https://arxiv.org/abs/1903.08008 for details

| mean | sd | hdi_3% | hdi_97% | mcse_mean | mcse_sd | ess_bulk | ess_tail | r_hat | |

|---|---|---|---|---|---|---|---|---|---|

| poverty | 0.051 | 0.295 | -0.426 | 0.640 | 0.015 | 0.016 | 366.145 | 219.495 | 1.014 |

| rev_rating | 0.453 | 0.106 | 0.258 | 0.649 | 0.006 | 0.004 | 314.644 | 315.514 | 1.011 |

| num_spots | 0.080 | 0.023 | 0.038 | 0.120 | 0.001 | 0.001 | 350.772 | 254.789 | 1.005 |

| crowded | -1.719 | 0.812 | -3.283 | -0.198 | 0.045 | 0.036 | 322.926 | 278.203 | 1.003 |

| rho | 0.432 | 0.120 | 0.220 | 0.661 | 0.006 | 0.005 | 367.146 | 287.289 | 1.008 |

| sigma | 24.275 | 2.208 | 20.730 | 28.992 | 0.117 | 0.155 | 363.195 | 219.473 | 1.013 |